Are you running irqbalance? Because that may help a lot. I can not only shape a gigabit, but I can do it over a squid proxy at the native clock freq. So there should be a lot of headroom on this device.

Yes, it is running. The issue is that SQM is single-threaded. See this pic in post #7 of this thread.

It's strange, because I managed to shape a gigabit with something like 10-15% of one core in the test thread. Granted, I was using hfsc, but I don't expect cake to be 10x as CPU-intensive.

@darksky Are you using snapshots?

There seems to be a regression in SQM performance lately:

I guess this will get fixed at some point, but in the meantime you can try an older version of sqm-scripts as cited. That should get you results more in line with @dlakelan's.

Yes, built against HEAD. I guess I could downgrade to the commit from Dec of 2020.

If you do, please try to incrementally add the 3 commits between Dec 2020 and Feb 28 2021 to see which of these innocent looking changes is responsible for the observed CPU load.

Well, for fixing it we first need to locate the offending change and then understand why it fails...

My home router is still on release 19 (and I can not currently change that due to homeschooling/videoconferencing)

Well, there is really only one changing commit, the other is just updating the hash. So 1.5.1 is bad and we know from that other thread that 1.5.0 is good.

I'm thinking there is a repo hosting these packages? Is there one for rc1, rc2, etc. of 21.02.1?

EDIT:

https://downloads.openwrt.org/releases/21.02.0-rc1/targets/ipq806x/generic/packages/

I'm not sure where the sqm-scripts package is in that directory tree, nor what dependencies it will need or whether pulling only that package would be a good idea. It might be easier to just edit the files manually, assuming those commits only change the scripts and nothing compiled.

    # opkg files sqm-scripts
    Package sqm-scripts (1.5.1-1) is installed on root and has the following files:
    /usr/lib/sqm/simplest.qos
    /etc/hotplug.d/iface/11-sqm
    /usr/lib/sqm/start-sqm
    /usr/lib/sqm/run.sh
    /etc/init.d/sqm
    /usr/lib/sqm/update-available-qdiscs
    /usr/lib/sqm/simple.qos.help
    /usr/lib/sqm/piece_of_cake.qos
    /usr/lib/sqm/layer_cake.qos.help
    /usr/lib/sqm/simple.qos
    /usr/lib/sqm/simplest_tbf.qos
    /usr/lib/sqm/stop-sqm
    /usr/lib/sqm/simplest_tbf.qos.help
    /usr/lib/sqm/piece_of_cake.qos.help
    /usr/lib/sqm/layer_cake.qos
    /usr/lib/sqm/defaults.sh
    /usr/lib/sqm/functions.sh
    /etc/sqm/sqm.conf
    /usr/lib/sqm/simplest.qos.help
    /etc/config/sqm

EDIT2: Making this too difficult:

Yes, you can basically edit the files in /usr/lib/sqm as well as /etc/hotplug.d/iface/11-sqm by hand to match the different versions of sqm-scripts. The package really is just a set of scripts that set up a sane, working qdisc combination to get competent AQM into the hands of normal users.

Just make a copy of the existing files on your router before you start editing. Personally, on a router with enough space/RAM I install both nano and midnight commander to make my life easier when editing files, but I am sure others prefer the terse elegance of ed or vi instead.

Just to be explicit for others (you already figured it out), between version 1.5.0 and 1.5.1 there were only three commits in sqm-scripts, so testing these by manually editing the relevant files seems not too difficult.

I note that I am facing some hotplug issues myself on my Turris Omnia (but that is with TOS, which is based on OpenWrt 19.07.8). These show up as SQM instances not coming up at all or going missing after hotplug events, not as massive increases in CPU cost.

Isn't the regression likely to be in the cake qdisc or the kernel rather than the scripts?

Yes, that would be the most likely way for SQM to break, but I think someone reported that selectively downgrading SQM on the just-released OpenWrt reduced the CPU load. I am not sure about the root cause, but it would help if one of the three commits in SQM could be shown to make a difference. Alas, I am in no position to really test that, so I need to recruit volunteers...

I cannot explain why, but I am now getting 38-40% CPU usage with fq_codel/simplest.qos, shaping at 900000 kbit/s downstream and 24000 kbit/s upstream. Really good performance on the waveform bufferbloat test. The point is that it is nowhere near saturation now. I did not change any SQM packages.

Hi,

this is my first post, so I'm sorry if this is the wrong place to ask but was this issue completely fixed by switching to the UE300 or is the change that's discussed here still needed?

I have a very similar setup, RPi4B with a UE300 currently running 21.02.0-rc4 and sqm-scripts 1.5.0-2. Since I specifically got it for running CAKE (for gaming) I'm not sure if I should wait until a fixed sqm-scripts package becomes available or upgrade to 21.02.0 stable and 1.5.1-1 right away.

I'd also volunteer to run some tests but I don't know if I'd be of much help since I'm not knowledgeable enough to just edit the files myself.

I have no explanation for the massive difference. Several things changed, including a new Ethernet cable and physically relocating the RPi4 to a different room, where it is plugged into some pre-wired panels. I found cake on this hardware to be inferior to fq_codel/simplest in my case.

Cake does a bunch of stuff that the simplest script doesn't. If you don't need those things then the simplest script is fine. Mainly they have to do with load balancing across multiple IPs and such. For a relatively small deployment with fast internet it may be overkill.

I ran some tests using cake/layer cake and cake/piece of cake on my RPi4.

I found nearly identical results using cake or fq_codel. The table shows the average of 2 runs. Also, despite maxing out one core, cake performed likely within error of the others.

| sqm | CPU limited? | sqm settings (down/up) | unloaded latency (ms) | download latency (ms) | upload latency (ms) | download (Mbps) | upload (Mbps) |
|---|---|---|---|---|---|---|---|
| none | no | n/a | 18 | 21 | 10 | 942.1 | 24.1 |
| simplest | no | 900k/24k | 18 | 3 | 1 | 857.9 | 23 |
| cake/layer cake | yes | 900k/24k | 17 | 4 | 1 | 828.7 | 22.9 |
| cake/piece of cake | yes | 900k/24k | 19 | 2 | 0 | 838.7 | 22.7 |

Please note that shaper settings are always gross rates, while speedtests report net throughput (also called goodput).

For a normal link layer (so not ATM) you can calculate the expected maximum goodput from the shaper gross rate and the per-packet overhead setting as follows:

gross_shaper_rate * ((packet_size - Internet_layer_overhead - Transport_layer_overhead - Application_layer_overhead) / (packet_size + SQM_overhead))

Application_layer_overhead is often not well known, but for online speedtests the HTTP/HTTPS encapsulation is relatively small and can be ignored.

So in your case we fill in the numbers (partly by guess-work):

- packet_size: for a speedtest, packets will mostly be as large as possible, which means ~1500 bytes
- Internet_layer_overhead: 20 B for IPv4, 40 B for IPv6
- Transport_layer_overhead: an online speedtest almost invariably uses TCP (until QUIC takes hold), so 20 B for the TCP header, probably without TCP options; if there are options, they will most likely be TCP timestamps (RFC 1323), adding 12 B
- Application_layer_overhead: relatively little, spread over a number of packets, so we just ignore it: "0 B"
- SQM_overhead: whatever you configured; if you configured nothing, it will be the kernel's default of 14 B. Since I have no clue what you configured, I simply assume that on a Gbps link your ISP uses true Ethernet without a VLAN tag, so 38 B

900 * ((1500 - 20 - 20 - 0)/(1500 + 38)) = 854.36 Mbps

Given the uncertainties in my calculations, all three results seem reasonably close to the theoretical limit.
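For anyone who wants to plug in their own numbers, here is a minimal Python sketch of the formula above. The function name `expected_goodput` and its parameter names are made up for illustration, and the defaults are the same guesses used in the calculation (IPv4, TCP without options, 38 B per-packet overhead), so treat the output as a rough estimate only.

```python
def expected_goodput(gross_rate, packet_size=1500, ip_overhead=20,
                     tcp_overhead=20, app_overhead=0, sqm_overhead=38):
    """Estimate maximum goodput for a non-ATM link.

    The result is in the same unit as gross_rate (e.g. Mbps).
    All defaults are assumptions taken from the worked example.
    """
    # Bytes of user data carried per packet.
    payload = packet_size - ip_overhead - tcp_overhead - app_overhead
    # Bytes the shaper accounts for per packet.
    on_wire = packet_size + sqm_overhead
    return gross_rate * payload / on_wire


if __name__ == "__main__":
    # Shaper gross rates from this thread: 900 Mbps down, 24 Mbps up.
    cases = [
        ("IPv4, no TCP options", {}),
        ("IPv4, TCP timestamps", {"tcp_overhead": 32}),
        ("IPv6, no TCP options", {"ip_overhead": 40}),
    ]
    for label, kwargs in cases:
        down = expected_goodput(900, **kwargs)
        up = expected_goodput(24, **kwargs)
        print(f"{label}: {down:.2f} Mbps down, {up:.2f} Mbps up")
```

With the defaults this reproduces the 854.36 Mbps figure for the download direction. Changing `sqm_overhead` to whatever is actually configured shows how sensitive the estimate is to that guess.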

I should note an observation: placing SQM on a bridge interface (br-wan) uses much more CPU than putting it on the physical interface (eth1). I get the same good results with much less CPU use.

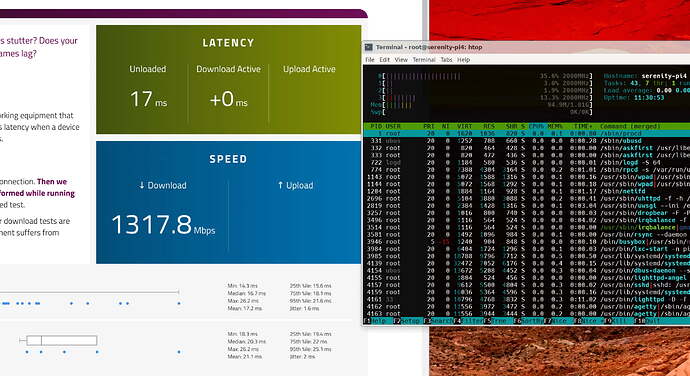

RPi4 (cake/piece_of_cake.qos depicted in screenshot) routing gigalan traffic without breaking a sweat. Screenshot is the waveform bufferbloat test.

- Peak CPU usage was <64% (on single core)

- Average CPU usage during 22 sec download test was 42.5% (on single core)
