Okay, I have some really good news to share with you all!

So I'm using a Raspberry Pi 4 and have a 10 Mbps fiber internet plan, but sometimes I get up to 96 Mbps on Google services like YouTube and Drive, and even on some sites like Amazon. Because of this, I couldn't apply CAKE SQM the way I used to without getting a bit of lag in hitreg, browsing and streaming.

Well, today that changed. Yesterday, with my friend's help, I installed a snapshot build with Qosify and DSCP tagging, plus CAKE with an adaptive bandwidth script. Now I'm getting mind-blowing speeds with really "impressive" hitreg under bulk downloads and no ping fluctuation.

9-10 devices were connected, most of them streaming Netflix/YouTube. I started downloading a file in IDM on my PC with a maximum of 2 connections, then started browsing, and everything was "instant": pages took no more than two seconds to load completely. At the same time I started playing Call of Duty during busy server hours, joined ranked, and the whole gameplay was superb. This setup took SQM to a whole new level; I haven't been this impressed in the last year. I'm thankful for all your help and dedication. Here are my configs and scripts:

/etc/config/qosify:

config defaults

list defaults /etc/qosify/*.conf

option dscp_icmp +besteffort

option dscp_default_tcp default_class

option dscp_default_udp default_class

config class default_class

option ingress CS1

option egress CS1

option prio_max_avg_pkt_len 1270

option dscp_prio CS4

option bulk_trigger_pps 600

option bulk_trigger_timeout 10

option dscp_bulk CS1

config class browsing

option ingress CS0

option egress CS0

option prio_max_avg_pkt_len 575

option dscp_prio AF41

option bulk_trigger_pps 400

option bulk_trigger_timeout 10

option dscp_bulk CS1

config class bulk

option ingress CS1

option egress CS1

config class besteffort

option ingress CS0

option egress CS0

config class network_services

option ingress CS2

option egress CS2

config class broadcast_video

option ingress CS3

option egress CS3

config class gaming

option ingress CS4

option egress CS4

config class multimedia_conferencing

option ingress AF42

option egress AF42

option prio_max_avg_pkt_len 575

option dscp_prio AF41

config class telephony

option ingress EF

option egress EF

config interface wan

option name wan

option disabled 0

option bandwidth_up 10mbit

option bandwidth_down 10mbit

option overhead_type none

# defaults:

option ingress 1

option egress 1

option mode diffserv4

option nat 1

option host_isolate 1

option autorate_ingress 0

option ingress_options ""

option egress_options ""

option options ""

config device wandev

option disabled 1

option name wan

option bandwidth 100mbit
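The per-class flow options above (`prio_max_avg_pkt_len`, `dscp_prio`, `bulk_trigger_pps`, `dscp_bulk`) are easier to reason about as a small decision. My reading of the qosify README is that a flow whose average packet length stays within `prio_max_avg_pkt_len` gets `dscp_prio`, while a flow exceeding `bulk_trigger_pps` gets `dscp_bulk` until it stays under that rate for `bulk_trigger_timeout` seconds. The sketch below illustrates this for `default_class` only. `classify_flow`, the bulk-before-prio ordering and the exact comparison operators are my assumptions, the timeout is not modelled, and the real marking happens inside qosify's eBPF program, not in shell.

```shell
#!/bin/sh
# Hypothetical sketch of qosify's per-flow marking for "default_class".
# Values are copied from /etc/config/qosify; the decision order
# (bulk check first, then prio) is an ASSUMPTION for illustration.
PRIO_MAX_AVG_PKT_LEN=1270   # bytes
BULK_TRIGGER_PPS=600        # packets per second
DSCP_PRIO=CS4
DSCP_BULK=CS1
DSCP_CLASS=CS1              # the class's own ingress/egress value

# classify_flow AVG_PKT_LEN PPS -> prints the DSCP the flow would get
classify_flow() {
    avg_len=$1
    pps=$2
    if [ "$pps" -gt "$BULK_TRIGGER_PPS" ]; then
        echo "$DSCP_BULK"       # heavy flow: demoted to bulk
    elif [ "$avg_len" -le "$PRIO_MAX_AVG_PKT_LEN" ]; then
        echo "$DSCP_PRIO"       # small packets: promoted
    else
        echo "$DSCP_CLASS"      # otherwise keep the class default
    fi
}

classify_flow 120 50      # small, slow flow (e.g. game traffic) -> CS4
classify_flow 1400 2000   # large, fast flow (e.g. download)     -> CS1
classify_flow 1400 100    # large, slow flow                     -> CS1
```

If that reading is right, the last case shows that with `egress CS1` and `dscp_bulk CS1` in `default_class`, large unclassified flows end up in CS1 either way, so the main effect of these options there is the CS4 promotion of small-packet flows.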

/etc/qosify/00-defaults.conf:

# SSH

tcp:22 network_services

# NTP

udp:123 network_services

# DNS (53) and mDNS (5353)

tcp:53 network_services

tcp:5353 network_services

udp:53 network_services

udp:5353 network_services

# DNS over TLS (DoT)

tcp:853 multimedia_conferencing

udp:853 multimedia_conferencing

# HTTP/HTTPS/QUIC

tcp:80 browsing

tcp:443 browsing

udp:80 browsing

udp:443 browsing

# Microsoft (Download)

dns:*1drv* bulk

dns:*backblaze* bulk

dns:*backblazeb2* bulk

dns:*ms-acdc.office* bulk

dns:*onedrive* bulk

dns:*sharepoint* bulk

dns:*update.microsoft* bulk

dns:*windowsupdate* bulk

# MEGA (Download)

dns:*mega* bulk

# Dropbox (Download)

dns:*dropboxusercontent* bulk

# Google (Download)

dns:*drive.google* bulk

dns:*googleusercontent* bulk

# Steam (Download)

dns:*steamcontent* bulk

# Epic Games (Download)

dns:*download.epicgames* bulk

dns:*download2.epicgames* bulk

dns:*download3.epicgames* bulk

dns:*download4.epicgames* bulk

dns:*epicgames-download1* bulk

# YouTube

dns:*googlevideo* besteffort

# Facebook

dns:*fbcdn* besteffort

# Twitch

dns:*ttvnw* besteffort

# TikTok

dns:*tiktok* besteffort

# Netflix

dns:*nflxvideo* besteffort

# Amazon Prime Video

dns:*aiv-cdn* besteffort

dns:*aiv-delivery* besteffort

dns:*pv-cdn* besteffort

# Disney Plus

dns:*disney* besteffort

dns:*dssott* besteffort

# HBO

dns:*hbo* besteffort

dns:*hbomaxcdn* besteffort

# BitTorrent

tcp:6881-7000 bulk

tcp:51413 bulk

udp:6771 bulk

udp:6881-7000 bulk

udp:51413 bulk

# Usenet

tcp:119 bulk

tcp:563 bulk

# Live Streaming to YouTube Live, Twitch, Vimeo and LinkedIn Live

tcp:1935-1936 broadcast_video

tcp:2396 broadcast_video

tcp:2935 broadcast_video

# Xbox

tcp:3074 gaming

udp:88 gaming

#udp:500 gaming # UDP port already used in "VoWiFi" rules

udp:3074 gaming

udp:3544 gaming

#udp:4500 gaming # UDP port already used in "VoWiFi" rules

# PlayStation

tcp:3478-3480 gaming

#udp:3478-3479 gaming # UDP ports already used in "Zoom" rules

# Call of Duty

#tcp:3074 gaming # TCP port already used in "Xbox" rules

tcp:3075-3076 gaming

#udp:3074 gaming # UDP port already used in "Xbox" rules

udp:3075-3079 gaming

udp:7500-7700 gaming

udp:3658 gaming

# FIFA

tcp:3659 gaming

udp:3659 gaming

# Minecraft

tcp:25565 gaming

udp:19132-19133 gaming

udp:25565 gaming

# Zoom, Microsoft Teams, Skype and FaceTime (they use these same ports)

udp:3478-3497 multimedia_conferencing

# Zoom

dns:*zoom* multimedia_conferencing

tcp:8801-8802 multimedia_conferencing

udp:8801-8810 multimedia_conferencing

# Skype

dns:*skype* multimedia_conferencing

# FaceTime

udp:16384-16387 multimedia_conferencing

udp:16393-16402 multimedia_conferencing

# GoToMeeting

udp:1853 multimedia_conferencing

udp:8200 multimedia_conferencing

# Webex Meeting

tcp:5004 multimedia_conferencing

udp:9000 multimedia_conferencing

# Jitsi Meet

tcp:5349 multimedia_conferencing

udp:10000 multimedia_conferencing

# Google Meet

udp:19302-19309 multimedia_conferencing

# TeamViewer

tcp:5938 multimedia_conferencing

udp:5938 multimedia_conferencing

# Voice over Internet Protocol (VoIP)

tcp:5060-5061 telephony

udp:5060-5061 telephony

# Voice over WiFi or WiFi Calling (VoWiFi)

udp:500 telephony

udp:4500 telephony
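Qosify only writes DSCP marks; the prioritization itself comes from CAKE running with `option mode diffserv4`, which sorts packets into four tins (Bulk, Best Effort, Video, Voice) by DSCP. Going by the diffserv4 comment in the Linux `sch_cake` source, the classes in these files should land roughly as below. `dscp_to_tin` is a hypothetical helper that covers only the codepoints used in these configs, not the full CAKE table.

```shell
#!/bin/sh
# Hypothetical helper: which CAKE diffserv4 tin a DSCP codepoint lands in.
# Mapping follows the diffserv4 comment in Linux sch_cake.c; only the
# codepoints used in the qosify configs are listed.
dscp_to_tin() {
    case "$1" in
        CS1)               echo "Bulk" ;;
        CS0)               echo "Best Effort" ;;
        CS2|CS3|AF41|AF42) echo "Video" ;;
        CS4|EF)            echo "Voice" ;;
        *)                 echo "unknown" ;;   # not covered by this sketch
    esac
}

# class:DSCP pairs, using the egress values from /etc/config/qosify
for pair in default_class:CS1 browsing:CS0 bulk:CS1 besteffort:CS0 \
            network_services:CS2 broadcast_video:CS3 gaming:CS4 \
            multimedia_conferencing:AF42 telephony:EF; do
    class=${pair%%:*}
    dscp=${pair#*:}
    printf '%-24s %-5s -> %s\n' "$class" "$dscp" "$(dscp_to_tin "$dscp")"
done
```

Read this way, `gaming` (CS4) shares the Voice tin with `telephony` (EF), browsing flows promoted to AF41 go to Video, and anything demoted to CS1 by `bulk_trigger_pps` drops to Bulk. On the router itself, `tc -s qdisc show dev <iface>` prints per-tin counters that can confirm where traffic actually goes.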

/root/sqm-autorate.sh:

#!/bin/sh

# automatically adjust bandwidth for CAKE depending on detected load and RTT

# inspired by @moeller0 (OpenWrt forum)

# initial sh implementation by @Lynx (OpenWrt forum)

# requires packages: iputils-ping, coreutils-date and coreutils-sleep

debug=1

enable_verbose_output=1 # enable (1) or disable (0) output monitoring lines showing bandwidth changes

ul_if=pppoe-wan # upload interface

dl_if=ifb-pppoe-wan # download interface

max_ul_rate=88000 # maximum bandwidth for upload (kbit/s)

min_ul_rate=9800 # minimum bandwidth for upload (kbit/s)

max_dl_rate=88000 # maximum bandwidth for download (kbit/s)

min_dl_rate=9800 # minimum bandwidth for download (kbit/s)

tick_duration=1 # seconds to wait between ticks

alpha_RTT_increase=0.001 # how rapidly baseline RTT is allowed to increase

alpha_RTT_decrease=0.9 # how rapidly baseline RTT is allowed to decrease

rate_adjust_RTT_spike=0.05 # how rapidly to reduce bandwidth upon detection of bufferbloat

rate_adjust_load_high=0.005 # how rapidly to increase bandwidth upon high load detected

rate_adjust_load_low=0.0025 # how rapidly to decrease bandwidth upon low load detected

load_thresh=0.5 # fraction of currently set bandwidth (0.5 = 50%) for detecting high load

max_delta_RTT=3 # increase from baseline RTT (ms) for detection of bufferbloat

# verify these are correct using 'cat /sys/class/...'

case "${dl_if}" in

\veth*)

rx_bytes_path="/sys/class/net/${dl_if}/statistics/tx_bytes"

;;

\ifb*)

rx_bytes_path="/sys/class/net/${dl_if}/statistics/tx_bytes"

;;

*)

rx_bytes_path="/sys/class/net/${dl_if}/statistics/rx_bytes"

;;

esac

case "${ul_if}" in

\veth*)

tx_bytes_path="/sys/class/net/${ul_if}/statistics/rx_bytes"

;;

\ifb*)

tx_bytes_path="/sys/class/net/${ul_if}/statistics/rx_bytes"

;;

*)

tx_bytes_path="/sys/class/net/${ul_if}/statistics/tx_bytes"

;;

esac

if [ "$debug" -eq 1 ] ; then

echo "rx_bytes_path: $rx_bytes_path"

echo "tx_bytes_path: $tx_bytes_path"

fi

# list of reflectors to use

read -d '' reflectors << EOF

1.1.1.1

1.0.0.1

EOF

RTTs=$(mktemp)

# get minimum RTT across entire set of reflectors

get_RTT() {

for reflector in $reflectors;

do

echo $(/usr/bin/ping -i 0.00 -c 10 $reflector | tail -1 | awk '{print $4}' | cut -d '/' -f 1) >> $RTTs&

done

wait

RTT=$(awk 'NF && (min == "" || $1 < min) {min = $1} END {print min}' "$RTTs") # one RTT per line; skip empty lines from failed pings

> $RTTs

}

call_awk() {

printf '%s' "$(awk 'BEGIN {print '"${1}"'}')"

}

get_next_shaper_rate() {

local cur_delta_RTT

local cur_max_delta_RTT

local cur_rate

local cur_rate_adjust_RTT_spike

local cur_max_rate

local cur_min_rate

local cur_load

local cur_load_thresh

local cur_rate_adjust_load_high

local cur_rate_adjust_load_low

local next_rate

cur_delta_RTT=$1

cur_max_delta_RTT=$2

cur_rate=$3

cur_rate_adjust_RTT_spike=$4

cur_max_rate=$5

cur_min_rate=$6

cur_load=$7

cur_load_thresh=$8

cur_rate_adjust_load_high=$9

cur_rate_adjust_load_low=${10}

# in case of supra-threshold RTT spikes decrease the rate unconditionally

if awk "BEGIN {exit !($cur_delta_RTT >= $cur_max_delta_RTT)}"; then

next_rate=$( call_awk "int(${cur_rate} - ${cur_rate_adjust_RTT_spike} * (${cur_max_rate} - ${cur_min_rate}) )" )

else

# ... otherwise take the current load into account

# high load, so we would like to increase the rate

if awk "BEGIN {exit !($cur_load >= $cur_load_thresh)}"; then

next_rate=$( call_awk "int(${cur_rate} + ${cur_rate_adjust_load_high} * (${cur_max_rate} - ${cur_min_rate}) )" )

else

# low load gently decrease the rate again

next_rate=$( call_awk "int(${cur_rate} - ${cur_rate_adjust_load_low} * (${cur_max_rate} - ${cur_min_rate}) )" )

fi

fi

# make sure to only return rates between cur_min_rate and cur_max_rate

if awk "BEGIN {exit !($next_rate < $cur_min_rate)}"; then

next_rate=$cur_min_rate;

fi

if awk "BEGIN {exit !($next_rate > $cur_max_rate)}"; then

next_rate=$cur_max_rate;

fi

echo "${next_rate}"

}

# update download and upload rates for CAKE

update_rates() {

cur_rx_bytes=$(cat $rx_bytes_path)

cur_tx_bytes=$(cat $tx_bytes_path)

t_cur_bytes=$(date +%s.%N)

rx_load=$( call_awk "(8/1000)*(${cur_rx_bytes} - ${prev_rx_bytes}) / (${t_cur_bytes} - ${t_prev_bytes}) * (1/${cur_dl_rate}) " )

tx_load=$( call_awk "(8/1000)*(${cur_tx_bytes} - ${prev_tx_bytes}) / (${t_cur_bytes} - ${t_prev_bytes}) * (1/${cur_ul_rate}) " )

t_prev_bytes=$t_cur_bytes

prev_rx_bytes=$cur_rx_bytes

prev_tx_bytes=$cur_tx_bytes

# calculate the next rate for dl and ul

cur_dl_rate=$( get_next_shaper_rate "$delta_RTT" "$max_delta_RTT" "$cur_dl_rate" "$rate_adjust_RTT_spike" "$max_dl_rate" "$min_dl_rate" "$rx_load" "$load_thresh" "$rate_adjust_load_high" "$rate_adjust_load_low" )

cur_ul_rate=$( get_next_shaper_rate "$delta_RTT" "$max_delta_RTT" "$cur_ul_rate" "$rate_adjust_RTT_spike" "$max_ul_rate" "$min_ul_rate" "$tx_load" "$load_thresh" "$rate_adjust_load_high" "$rate_adjust_load_low" )

if [ $enable_verbose_output -eq 1 ]; then

printf "%s;%14.2f;%14.2f;%14.2f;%14.2f;%14.2f;%14.2f;%14.2f;\n" $( date "+%Y%m%dT%H%M%S.%N" ) $rx_load $tx_load $baseline_RTT $RTT $delta_RTT $cur_dl_rate $cur_ul_rate

fi

}

get_baseline_RTT() {

local cur_RTT

local cur_delta_RTT

local last_baseline_RTT

local cur_alpha_RTT_increase

local cur_alpha_RTT_decrease

local cur_baseline_RTT

cur_RTT=$1

cur_delta_RTT=$2

last_baseline_RTT=$3

cur_alpha_RTT_increase=$4

cur_alpha_RTT_decrease=$5

if awk "BEGIN {exit !($cur_delta_RTT >= 0)}"; then

cur_baseline_RTT=$( call_awk "( 1 - ${cur_alpha_RTT_increase} ) * ${last_baseline_RTT} + ${cur_alpha_RTT_increase} * ${cur_RTT} " )

else

cur_baseline_RTT=$( call_awk "( 1 - ${cur_alpha_RTT_decrease} ) * ${last_baseline_RTT} + ${cur_alpha_RTT_decrease} * ${cur_RTT} " )

fi

echo "${cur_baseline_RTT}"

}

# set initial values for first run

get_RTT

baseline_RTT=$RTT;

cur_dl_rate=$min_dl_rate

cur_ul_rate=$min_ul_rate

# set the last rates different from the cur_XX_rates so that on the first round we are guaranteed to call tc

last_dl_rate=0

last_ul_rate=0

t_prev_bytes=$(date +%s.%N)

prev_rx_bytes=$(cat $rx_bytes_path)

prev_tx_bytes=$(cat $tx_bytes_path)

if [ $enable_verbose_output -eq 1 ]; then

printf "%25s;%14s;%14s;%14s;%14s;%14s;%14s;%14s;\n" "log_time" "rx_load" "tx_load" "baseline_RTT" "RTT" "delta_RTT" "cur_dl_rate" "cur_ul_rate"

fi

# main loop runs every tick_duration seconds

while true

do

t_start=$(date +%s.%N)

get_RTT

delta_RTT=$( call_awk "${RTT} - ${baseline_RTT}" )

baseline_RTT=$( get_baseline_RTT "$RTT" "$delta_RTT" "$baseline_RTT" "$alpha_RTT_increase" "$alpha_RTT_decrease" )

update_rates

# only fire up tc if there are rates to change...

if [ "$last_dl_rate" -ne "$cur_dl_rate" ] ; then

#echo "tc qdisc change root dev ${dl_if} cake bandwidth ${cur_dl_rate}Kbit"

tc qdisc change root dev ${dl_if} cake bandwidth ${cur_dl_rate}Kbit

fi

if [ "$last_ul_rate" -ne "$cur_ul_rate" ] ; then

#echo "tc qdisc change root dev ${ul_if} cake bandwidth ${cur_ul_rate}Kbit"

tc qdisc change root dev ${ul_if} cake bandwidth ${cur_ul_rate}Kbit

fi

# remember the last rates

last_dl_rate=$cur_dl_rate

last_ul_rate=$cur_ul_rate

t_end=$(date +%s.%N)

sleep_duration=$( call_awk "${tick_duration} - ${t_end} + ${t_start}" )

if awk "BEGIN {exit !($sleep_duration > 0)}"; then

sleep $sleep_duration

fi

done
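To get a feel for how fast the script reacts, note that every step is a fixed fraction of the range `max_rate - min_rate`, which is 88000 - 9800 = 78200 kbit/s here. An RTT spike cuts the rate by 0.05 × 78200 = 3910 kbit/s, high load raises it by 0.005 × 78200 = 391 kbit/s, and low load lowers it by 0.0025 × 78200 = 195.5 kbit/s (truncated by `int()`). Climbing from the minimum to the maximum under sustained load would therefore take 78200 / 391 = 200 ticks, roughly 200 seconds at `tick_duration=1`. The sketch below reproduces the formulas of `get_next_shaper_rate` in a single awk call; `step_rate` is a hypothetical helper name, not part of the script.

```shell
#!/bin/sh
# Standalone sketch of one autorate tick, reusing the formulas from
# get_next_shaper_rate in /root/sqm-autorate.sh.
# Rates are in kbit/s, RTT deltas in ms. "step_rate" is hypothetical.
max_rate=88000
min_rate=9800
max_delta_RTT=3
load_thresh=0.5
rate_adjust_RTT_spike=0.05
rate_adjust_load_high=0.005
rate_adjust_load_low=0.0025

# step_rate CUR_RATE DELTA_RTT LOAD -> next rate, clamped to [min, max]
step_rate() {
    awk -v cur="$1" -v d="$2" -v load="$3" \
        -v max="$max_rate" -v min="$min_rate" -v dmax="$max_delta_RTT" \
        -v thr="$load_thresh" -v spike="$rate_adjust_RTT_spike" \
        -v hi="$rate_adjust_load_high" -v lo="$rate_adjust_load_low" '
    BEGIN {
        range = max - min
        if (d >= dmax)        next_rate = int(cur - spike * range)  # bufferbloat: back off hard
        else if (load >= thr) next_rate = int(cur + hi * range)     # busy link: probe upward
        else                  next_rate = int(cur - lo * range)     # quiet link: drift down
        if (next_rate < min) next_rate = min
        if (next_rate > max) next_rate = max
        print next_rate
    }'
}

step_rate 50000 5 0.9   # RTT spike: 50000 - 3910   -> 46090
step_rate 50000 1 0.9   # high load: 50000 + 391    -> 50391
step_rate 50000 1 0.1   # low load:  50000 - 195.5  -> 49804
step_rate 9900 1 0.1    # would drop below min_rate -> 9800

# count ticks needed to climb from min_rate to max_rate under sustained load
rate=$min_rate
ticks=0
while [ "$rate" -lt "$max_rate" ]; do
    rate=$(step_rate "$rate" 0 1)
    ticks=$((ticks + 1))
done
echo "ticks to reach max: $ticks"   # -> 200
```

The asymmetry is deliberate in the script's parameters: a single RTT spike undoes about ten ticks of upward probing, so latency recovers quickly while bandwidth is regained slowly.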

Yes, I know the whole setup isn't perfect and there might be mistakes, but this is just a one-day test and I'm happy with it. If any of you want to correct the scripts above or ask questions, you're welcome to.

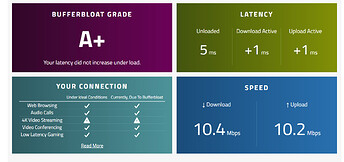

Edit: removed the overhead values since I didn't see any difference, and removed the "+" from the browsing rules. Here is my bufferbloat test: