Just for reference, I'm currently running OpenWrt with SQM on an eight-year-old Ivy Bridge-era dual-core Celeron 1037U (4 GB RAM, 500 GB SSHD, 2x Intel 82574L (e1000e) Ethernet), which can route, NAT, firewall and run SQM easily at full 1 Gbit/s wire speed (~53% CPU load on one core, while the other idles around 14%-20%). It's totally bored handling my real WAN connection (400/200 Mbit/s) and rarely even clocks above 800 MHz; ~15 watts idle, 22-25 watts under full load (kernel compile/ffmpeg).

Only because I've done it like a dozen times (and felt suitably stupid afterward): I see you are running SQM on your eth4 interface. This (eth4) is your WAN interface, correct? If it is not, try selecting your WAN interface, whichever one that might be. It works much better that way.
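If it is unclear which port is the WAN side, one way to check is to look at which device carries the default route. The following is only a minimal sketch: the function name `default_route_dev` is made up for this example, and it assumes the usual `ip route` output format with a `dev <name>` pair on the default route line.

```shell
# Read `ip route`-style output on stdin and print the device of the
# first default route. The function name is hypothetical.
default_route_dev() {
    awk '$1 == "default" {
        for (i = 2; i < NF; i++)
            if ($i == "dev") { print $(i + 1); exit }
    }'
}
```

On the router this would be run as `ip route show default | default_route_dev`. Whatever it prints is probably the interface to pick in the SQM settings, although on setups with tunnels or PPPoE the device shown may be a virtual interface rather than the physical port.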

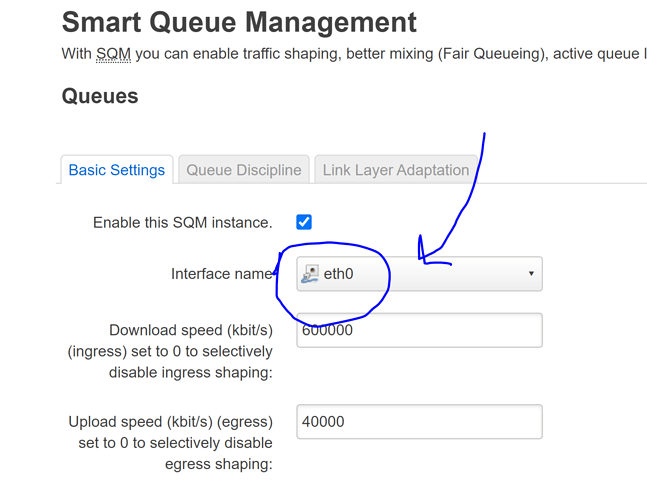

Yeah, I looked into that many times. I actually changed it to eth0, because someone mentioned somewhere else that the scripts were hardcoded with eth0. Not sure if that's true.

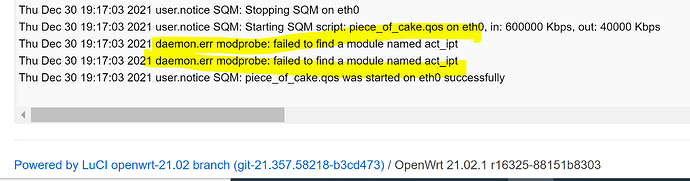

I re-enabled cake and these are the system logs; not sure what the error is...

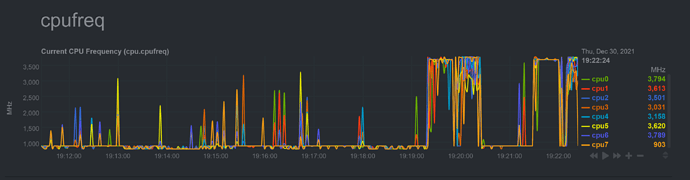

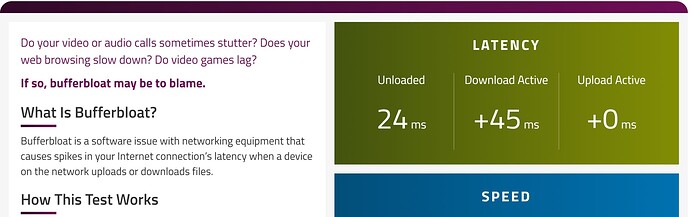

Here is the CPU frequency while running the Waveform bufferbloat test:

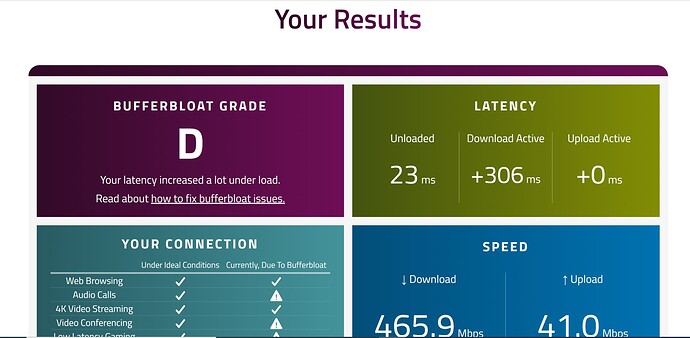

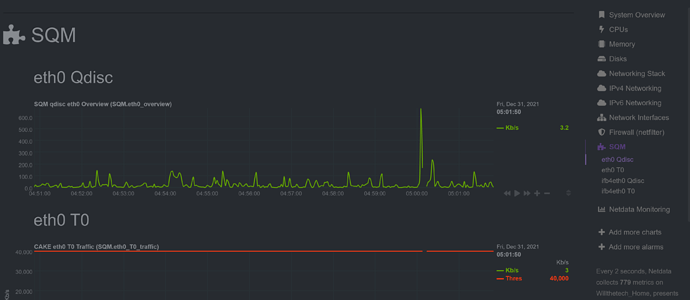

and here is the result with cake enabled...that's very bad

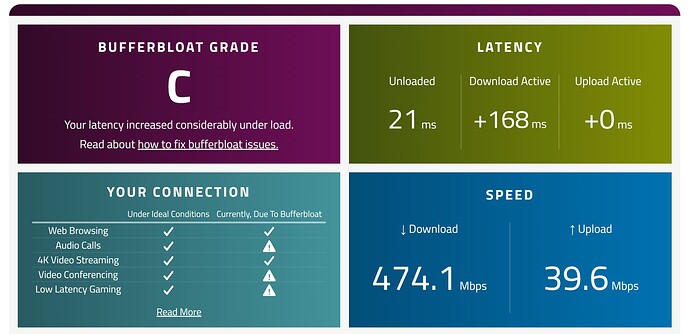

same test with fqcodel simple qos

system logs with fqcodel...

I think i am giving gup lol!

Don't give up! I know this is frustrating in the present moment, but seriously, CAKE is so good when it's working.

As to your act_ipt question, it doesn't sound like that's the issue here. I'm basing that on this post:

Would you mind posting your /etc/config/sqm file again in its entirety?

Also, are you building your OpenWrt image yourself or downloading a pre-built image? (Sorry if I missed this previously)

I am using a pre-built image; I chose the latest available...

OpenWrt version: generic-ext4-combined-efi.img.gz

Here is the info:

root@Willthetech_Home:~# cat /etc/config/sqm

config queue 'eth1'

option debug_logging '0'

option verbosity '5'

option enabled '1'

option interface 'eth0'

option linklayer 'none'

option download '600000'

option upload '40000'

option qdisc 'cake'

option script 'piece_of_cake.qos'

root@Willthetech_Home:~# tc -s qdisc

qdisc noqueue 0: dev lo root refcnt 2

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc cake 8009: dev eth0 root refcnt 9 bandwidth 40Mbit besteffort triple-isolate nonat nowash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 175330683 bytes 819991 pkt (dropped 373, overlimits 1316669 requeues 0)

backlog 0b 0p requeues 0

memory used: 183100b of 4Mb

capacity estimate: 40Mbit

min/max network layer size: 42 / 1514

min/max overhead-adjusted size: 42 / 1514

average network hdr offset: 14

Tin 0

thresh 40Mbit

target 5ms

interval 100ms

pk_delay 1.46ms

av_delay 207us

sp_delay 2us

backlog 0b

pkts 820364

bytes 175870869

way_inds 1141

way_miss 1195

way_cols 0

drops 373

marks 0

ack_drop 0

sp_flows 1

bk_flows 1

un_flows 0

max_len 21810

quantum 1220

qdisc ingress ffff: dev eth0 parent ffff:fff1 ----------------

Sent 2085107885 bytes 1493796 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc mq 0: dev eth1 root

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc fq_codel 0: dev eth1 parent :8 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :7 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :6 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :5 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :4 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :3 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :2 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth1 parent :1 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc mq 0: dev eth2 root

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc fq_codel 0: dev eth2 parent :8 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :7 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :6 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :5 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :4 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :3 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :2 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth2 parent :1 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

maxpacket 0 drop_overlimit 0 new_flow_count 0 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc mq 0: dev eth3 root

Sent 2142684821 bytes 1468975 pkt (dropped 0, overlimits 0 requeues 78)

backlog 0b 0p requeues 78

qdisc fq_codel 0: dev eth3 parent :8 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 1493812 bytes 16842 pkt (dropped 0, overlimits 0 requeues 4)

backlog 0b 0p requeues 4

maxpacket 1514 drop_overlimit 0 new_flow_count 1 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :7 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 483665269 bytes 328119 pkt (dropped 0, overlimits 0 requeues 9)

backlog 0b 0p requeues 9

maxpacket 1514 drop_overlimit 0 new_flow_count 3 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :6 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 339703052 bytes 225926 pkt (dropped 0, overlimits 0 requeues 18)

backlog 0b 0p requeues 18

maxpacket 1514 drop_overlimit 0 new_flow_count 11 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :5 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 213609624 bytes 149979 pkt (dropped 0, overlimits 0 requeues 9)

backlog 0b 0p requeues 9

maxpacket 1514 drop_overlimit 0 new_flow_count 3 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :4 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 337643403 bytes 223769 pkt (dropped 0, overlimits 0 requeues 12)

backlog 0b 0p requeues 12

maxpacket 1514 drop_overlimit 0 new_flow_count 5 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :3 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 450300751 bytes 306134 pkt (dropped 0, overlimits 0 requeues 13)

backlog 0b 0p requeues 13

maxpacket 1514 drop_overlimit 0 new_flow_count 5 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :2 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 153653553 bytes 102481 pkt (dropped 0, overlimits 0 requeues 5)

backlog 0b 0p requeues 5

maxpacket 107 drop_overlimit 0 new_flow_count 1 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc fq_codel 0: dev eth3 parent :1 limit 10240p flows 1024 quantum 1514 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

Sent 162615357 bytes 115725 pkt (dropped 0, overlimits 0 requeues 8)

backlog 0b 0p requeues 8

maxpacket 1514 drop_overlimit 0 new_flow_count 2 ecn_mark 0

new_flows_len 0 old_flows_len 0

qdisc noqueue 0: dev br-lan root refcnt 2

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc noqueue 0: dev docker0 root refcnt 2

Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc cake 800a: dev ifb4eth0 root refcnt 2 bandwidth 600Mbit besteffort triple-isolate nonat wash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 2144263891 bytes 1493013 pkt (dropped 783, overlimits 1673299 requeues 0)

backlog 0b 0p requeues 0

memory used: 13816701b of 15140Kb

capacity estimate: 600Mbit

min/max network layer size: 60 / 1514

min/max overhead-adjusted size: 60 / 1514

average network hdr offset: 14

Tin 0

thresh 600Mbit

target 5ms

interval 100ms

pk_delay 15us

av_delay 3us

sp_delay 0us

backlog 0b

pkts 1493796

bytes 2145446613

way_inds 325

way_miss 1107

way_cols 0

drops 783

marks 0

ack_drop 0

sp_flows 0

bk_flows 1

un_flows 0

max_len 27252

quantum 1514

root@Willthetech_Home:~# ifstatus wan

Interface wan not found

Could you please post the following:

- tc -s qdisc show dev eth0 ; echo " " ; tc -s qdisc show dev ifb4eth0
- run a Waveform speedtest and post the "Share Your Results:" link here, and immediately afterwards run (again):
- tc -s qdisc show dev eth0 ; echo " " ; tc -s qdisc show dev ifb4eth0

I am interested in what pk_delay we will see.
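To make the delay figures easier to spot in the long `tc -s qdisc` output, a small filter can help. This is just a sketch: `cake_delays` is a made-up helper name, and it assumes the field names `pk_delay`, `av_delay` and `sp_delay` appear at the start of a line, as they do in cake's statistics. With a multi-tin setup it only prints the first tin's column.

```shell
# Read `tc -s qdisc show dev <iface>` output on stdin and print only
# cake's peak, average and sparse delay values (first tin only).
# The function name is hypothetical.
cake_delays() {
    awk '$1 == "pk_delay" || $1 == "av_delay" || $1 == "sp_delay" { print $1, $2 }'
}
```

Example use: `tc -s qdisc show dev ifb4eth0 | cake_delays`. Running it in a loop during the speedtest (for instance `while sleep 1; do tc -s qdisc show dev ifb4eth0 | cake_delays; done`) may catch values that have already faded by the time the test has finished.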

root@Willthetech_Home:~# tc -s qdisc show dev eth0 ; echo " " ; tc -s qdisc show dev ifb4eth0

qdisc cake 8009: root refcnt 9 bandwidth 40Mbit besteffort triple-isolate nonat nowash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 281015215 bytes 1664785 pkt (dropped 386, overlimits 1442336 requeues 5)

backlog 0b 0p requeues 5

memory used: 326Kb of 4Mb

capacity estimate: 40Mbit

min/max network layer size: 42 / 1514

min/max overhead-adjusted size: 42 / 1514

average network hdr offset: 14

Tin 0

thresh 40Mbit

target 5ms

interval 100ms

pk_delay 1.42ms

av_delay 118us

sp_delay 1us

backlog 0b

pkts 1665171

bytes 281574897

way_inds 20044

way_miss 14547

way_cols 27

drops 386

marks 0

ack_drop 0

sp_flows 1

bk_flows 1

un_flows 0

max_len 21810

quantum 1220

qdisc ingress ffff: parent ffff:fff1 ----------------

Sent 5331062625 bytes 4326685 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc cake 800a: root refcnt 2 bandwidth 600Mbit besteffort triple-isolate nonat wash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 5485700216 bytes 4325899 pkt (dropped 787, overlimits 3001226 requeues 0)

backlog 0b 0p requeues 0

memory used: 13816701b of 15140Kb

capacity estimate: 600Mbit

min/max network layer size: 60 / 1514

min/max overhead-adjusted size: 60 / 1514

average network hdr offset: 14

Tin 0

thresh 600Mbit

target 5ms

interval 100ms

pk_delay 16us

av_delay 4us

sp_delay 0us

backlog 0b

pkts 4326686

bytes 5486888739

way_inds 126700

way_miss 14172

way_cols 0

drops 787

marks 0

ack_drop 0

sp_flows 0

bk_flows 1

un_flows 0

max_len 33308

quantum 1514

root@Willthetech_Home:~# tc -s qdisc show dev eth0 ; echo " " ; tc -s qdisc show dev ifb4eth0

qdisc cake 8009: root refcnt 9 bandwidth 40Mbit besteffort triple-isolate nonat nowash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 460497515 bytes 2457170 pkt (dropped 785, overlimits 2668535 requeues 5)

backlog 0b 0p requeues 5

memory used: 326Kb of 4Mb

capacity estimate: 40Mbit

min/max network layer size: 42 / 1514

min/max overhead-adjusted size: 42 / 1514

average network hdr offset: 14

Tin 0

thresh 40Mbit

target 5ms

interval 100ms

pk_delay 1.88ms

av_delay 1.14ms

sp_delay 1us

backlog 0b

pkts 2457955

bytes 461631514

way_inds 20075

way_miss 15076

way_cols 27

drops 785

marks 0

ack_drop 0

sp_flows 0

bk_flows 1

un_flows 0

max_len 21810

quantum 1220

qdisc ingress ffff: parent ffff:fff1 ----------------

Sent 6994534794 bytes 5521497 pkt (dropped 0, overlimits 0 requeues 0)

backlog 0b 0p requeues 0

qdisc cake 800a: root refcnt 2 bandwidth 600Mbit besteffort triple-isolate nonat wash no-ack-filter split-gso rtt 100ms raw overhead 0

Sent 7191556252 bytes 5519395 pkt (dropped 2102, overlimits 4402889 requeues 0)

backlog 0b 0p requeues 0

memory used: 13816701b of 15140Kb

capacity estimate: 600Mbit

min/max network layer size: 60 / 1514

min/max overhead-adjusted size: 60 / 1514

average network hdr offset: 14

Tin 0

thresh 600Mbit

target 5ms

interval 100ms

pk_delay 14us

av_delay 2us

sp_delay 0us

backlog 0b

pkts 5521497

bytes 7194720896

way_inds 138456

way_miss 14685

way_cols 0

drops 2102

marks 0

ack_drop 0

sp_flows 0

bk_flows 1

un_flows 0

max_len 33308

quantum 1514

Odd thing is, if I set my bandwidth to 150 Mbit/s or below it seems to work great, but with anything above that I get some really bad latency. If I change to fq_codel / simple QoS and set my bandwidth to 600 Mbit/s, I get about 60 ms latency; still not as good as I want, but way better than what cake is giving me at the same speed setting.

Mmmh, so that shows that on ingress/download, where you see the atrocious bufferbloat in the Waveform speedtest, cake also reports matching high internal sojourn times... that again makes me think about issues with getting access to the CPU....
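To put the CPU suspicion into rough numbers, here is a back-of-the-envelope sketch of how little time there is per packet at a given shaper rate. It assumes full-size 1514-byte frames (the maximum size cake reports in the output above) and ignores everything else the router has to do; `packet_budget` is a made-up helper name.

```shell
# Usage: packet_budget RATE_MBIT [PACKET_BYTES]
# Prints packets per second and the time available per packet when a
# link runs at RATE_MBIT with packets of PACKET_BYTES (default 1514).
# The function name is hypothetical.
packet_budget() {
    awk -v rate="$1" -v size="${2:-1514}" 'BEGIN {
        bits = size * 8
        # rate is in Mbit/s, so bits / rate yields microseconds
        printf "%.0f pps, %.1f us per packet\n", rate * 1000000 / bits, bits / rate
    }'
}
```

`packet_budget 150` gives about 12384 packets per second with roughly 80.7 us per packet, while `packet_budget 600` gives about 49538 packets per second with only about 20.2 us each. If the core doing the shaping is sometimes unavailable for longer than that (for example while it clocks up or wakes from a sleep state), packets would queue up, which might explain why 150 Mbit/s behaves and 600 Mbit/s does not.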

BTW, your netdata plots are more helpful than the cake statistics, simply because the peak delay ages out too quickly (this comes from the download test being followed by the upload test, which basically resets the download peak counter due to the reverse ACK traffic and whatnot else).

I think seeing the output of:

cat /proc/interrupts

might be interesting....
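The raw `/proc/interrupts` table is hard to read at a glance, so a per-CPU sum for one NIC can be handy. The sketch below assumes the layout shown by typical x86 kernels: a header row of CPU names, then one row per IRQ with the per-CPU counts in columns 2 onwards and the queue name (such as `eth0-TxRx-3`) as the last field. `irq_per_cpu` is a made-up helper name.

```shell
# Usage: irq_per_cpu IFACE < /proc/interrupts
# Sums the per-CPU interrupt counts over all queues of one NIC
# (rows whose last field starts with "IFACE-").
# The function name is hypothetical.
irq_per_cpu() {
    awk -v ifc="$1" '
        NR == 1 { ncpu = NF; next }            # header row: CPU0 CPU1 ...
        $NF ~ ("^" ifc "-") {                  # e.g. eth0-TxRx-3
            for (c = 0; c < ncpu; c++) sum[c] += $(c + 2)
        }
        END { for (c = 0; c < ncpu; c++) printf "CPU%d %d\n", c, sum[c] }
    '
}
```

Example use: `irq_per_cpu eth0 < /proc/interrupts`. A very lopsided result would suggest that most of the packet processing lands on one or two cores.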

root@Willthetech_Home:~# cat /proc/interrupts

CPU0 CPU1 CPU2 CPU3 CPU4 CPU5 CPU6 CPU7

0: 28 0 0 0 0 0 0 0 IO-APIC 2-edge timer

1: 0 8 0 0 0 0 0 0 IO-APIC 1-edge i8042

4: 0 0 0 15 0 0 0 0 IO-APIC 4-edge ttyS0

8: 0 0 1 0 0 0 0 0 IO-APIC 8-edge rtc0

9: 0 5 0 0 0 0 0 0 IO-APIC 9-fasteoi acpi

12: 9 0 0 0 0 0 0 0 IO-APIC 12-edge i8042

123: 0 0 0 0 0 45 0 0 PCI-MSI 32768-edge i915

124: 0 206 0 0 0 0 2022 0 PCI-MSI 376832-edge ahci[0000:00:17.0]

125: 0 0 0 0 0 0 0 0 PCI-MSI 327680-edge xhci_hcd

126: 1 0 0 0 0 0 0 0 PCI-MSI 2097152-edge eth0

127: 0 845 0 0 1112058 0 0 0 PCI-MSI 2097153-edge eth0-TxRx-0

128: 0 0 148 0 0 0 0 309415 PCI-MSI 2097154-edge eth0-TxRx-1

129: 0 0 0 324732 0 0 0 0 PCI-MSI 2097155-edge eth0-TxRx-2

130: 0 433138 0 0 141 0 0 0 PCI-MSI 2097156-edge eth0-TxRx-3

131: 0 0 0 0 0 593524 0 0 PCI-MSI 2097157-edge eth0-TxRx-4

132: 0 1235432 0 0 0 0 95 0 PCI-MSI 2097158-edge eth0-TxRx-5

133: 0 0 0 0 0 0 655743 59 PCI-MSI 2097159-edge eth0-TxRx-6

134: 73 0 296428 0 0 0 0 0 PCI-MSI 2097160-edge eth0-TxRx-7

135: 0 0 0 0 0 0 0 0 PCI-MSI 2099200-edge eth1

136: 0 3998 0 0 0 0 7 0 PCI-MSI 2099201-edge eth1-TxRx-0

137: 0 0 3998 0 0 0 0 7 PCI-MSI 2099202-edge eth1-TxRx-1

138: 7 0 0 0 0 0 0 3998 PCI-MSI 2099203-edge eth1-TxRx-2

139: 3998 7 0 0 0 0 0 0 PCI-MSI 2099204-edge eth1-TxRx-3

140: 0 0 7 0 0 0 0 3998 PCI-MSI 2099205-edge eth1-TxRx-4

141: 0 0 0 7 0 3998 0 0 PCI-MSI 2099206-edge eth1-TxRx-5

142: 0 0 0 3998 7 0 0 0 PCI-MSI 2099207-edge eth1-TxRx-6

143: 0 0 0 0 0 7 3998 0 PCI-MSI 2099208-edge eth1-TxRx-7

144: 0 0 0 0 0 0 0 0 PCI-MSI 2101248-edge eth2

145: 0 0 0 0 0 3998 0 7 PCI-MSI 2101249-edge eth2-TxRx-0

146: 7 0 3998 0 0 0 0 0 PCI-MSI 2101250-edge eth2-TxRx-1

147: 0 7 0 0 0 0 3998 0 PCI-MSI 2101251-edge eth2-TxRx-2

148: 3998 0 7 0 0 0 0 0 PCI-MSI 2101252-edge eth2-TxRx-3

149: 0 0 0 7 3998 0 0 0 PCI-MSI 2101253-edge eth2-TxRx-4

150: 0 0 0 3998 7 0 0 0 PCI-MSI 2101254-edge eth2-TxRx-5

151: 0 0 0 0 0 7 0 3998 PCI-MSI 2101255-edge eth2-TxRx-6

152: 0 0 3998 0 0 0 7 0 PCI-MSI 2101256-edge eth2-TxRx-7

153: 0 0 0 0 0 0 0 1 PCI-MSI 2103296-edge eth3

154: 194 0 0 0 1140012 0 0 0 PCI-MSI 2103297-edge eth3-TxRx-0

155: 0 202 0 389242 0 0 0 0 PCI-MSI 2103298-edge eth3-TxRx-1

156: 0 0 66 0 0 0 0 395008 PCI-MSI 2103299-edge eth3-TxRx-2

157: 0 362142 0 83 0 0 0 0 PCI-MSI 2103300-edge eth3-TxRx-3

158: 0 0 0 0 92 322859 0 0 PCI-MSI 2103301-edge eth3-TxRx-4

159: 0 0 0 0 0 314669 0 0 PCI-MSI 2103302-edge eth3-TxRx-5

160: 0 0 0 0 0 0 528970 0 PCI-MSI 2103303-edge eth3-TxRx-6

161: 0 0 0 0 0 0 1071147 111 PCI-MSI 2103304-edge eth3-TxRx-7

NMI: 0 0 0 0 0 0 0 0 Non-maskable interrupts

LOC: 3128146 2513398 2687286 2649625 2502015 2488208 2792236 2592385 Local timer interrupts

SPU: 0 0 0 0 0 0 0 0 Spurious interrupts

PMI: 0 0 0 0 0 0 0 0 Performance monitoring interrupts

IWI: 0 0 0 0 0 0 0 0 IRQ work interrupts

RTR: 5 0 0 0 0 0 0 0 APIC ICR read retries

RES: 3002 2670 1826 2934 2664 2977 3349 2110 Rescheduling interrupts

CAL: 1229458 1100057 638168 655226 682061 832889 680377 578411 Function call interrupts

TLB: 188 178 212 153 279 165 294 133 TLB shootdowns

TRM: 0 0 0 0 0 0 0 0 Thermal event interrupts

THR: 0 0 0 0 0 0 0 0 Threshold APIC interrupts

DFR: 0 0 0 0 0 0 0 0 Deferred Error APIC interrupts

MCE: 0 0 0 0 0 0 0 0 Machine check exceptions

MCP: 26 27 27 27 27 27 27 27 Machine check polls

ERR: 4

MIS: 0

PIN: 0 0 0 0 0 0 0 0 Posted-interrupt notification event

NPI: 0 0 0 0 0 0 0 0 Nested posted-interrupt event

PIW: 0 0 0 0 0 0 0 0 Posted-interrupt wakeup event

@WilR I don’t know if this will help, but for reference I have a 400/20mbps cable connection. I actually get more like 480/24mbps. Regardless, here is the SQM config I use:

root@OpenWrt:~# cat /etc/config/sqm

config queue

option interface 'eth0'

option debug_logging '0'

option verbosity '5'

option qdisc 'cake'

option linklayer 'none'

option qdisc_advanced '1'

option squash_dscp '0'

option squash_ingress '1'

option ingress_ecn 'ECN'

option egress_ecn 'ECN'

option qdisc_really_really_advanced '1'

option iqdisc_opts 'docsis dual-dsthost nat ingress'

option eqdisc_opts 'docsis dual-srchost nat ack-filter'

option enabled '1'

option upload '24500'

option download '462500'

option script 'ctinfo_4layercake.qos'

I wonder if you might be willing to drop in this config and give it a test. Obviously you’ll want to modify the upload, download, and interface to match your setup. But I’m just curious what your experience will be with this known good config.

Notice that my linklayer option is “none”, however that’s because I specify “docsis” in the advanced config options.
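For context, the tc-cake man page describes the `docsis` keyword as shorthand for `overhead 18 mpu 64 noatm`. As a rough illustration of what that means for accounting (a simplified sketch, not cake's actual code), the shaper charges each packet its IP length plus 18 bytes, with a floor of 64 bytes. `cake_docsis_size` is a made-up helper name.

```shell
# Usage: cake_docsis_size IP_PACKET_BYTES
# Approximates the size cake would account for with "docsis"
# (overhead 18, mpu 64). Simplified; the function name is hypothetical.
cake_docsis_size() {
    size=$(( $1 + 18 ))          # add the per-packet overhead
    if [ "$size" -lt 64 ]; then  # enforce the minimum packet unit
        size=64
    fi
    echo "$size"
}
```

Under these assumptions `cake_docsis_size 1500` prints 1518 and `cake_docsis_size 28` prints 64, whereas with `raw overhead 0` no such compensation is applied.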

You can create a backup of your current SQM config in case this doesn’t give positive results.
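A backup can be as simple as copying the file next to the original. This is a minimal sketch; the helper name `backup_config` and the `.bak` suffix are arbitrary choices for this example.

```shell
# Usage: backup_config FILE
# Copies FILE to FILE.bak (keeping mode and timestamps) and prints the
# name of the backup. The function name is hypothetical.
backup_config() {
    src="$1"
    dst="${src}.bak"
    cp -p "$src" "$dst" && echo "$dst"
}
```

On the router: `backup_config /etc/config/sqm`. To go back, copy `/etc/config/sqm.bak` over `/etc/config/sqm` and restart SQM with `/etc/init.d/sqm restart`.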

done

OpenWrt 21.02.1, r16325-88151b8303

-----------------------------------------------------

root@Willthetech_Home:~# cat /etc/config/sqm

config queue

option interface 'eth0'

option debug_logging '0'

option verbosity '5'

option qdisc 'cake'

option linklayer 'none'

option qdisc_advanced '1'

option squash_dscp '0'

option squash_ingress '1'

option ingress_ecn 'ECN'

option egress_ecn 'ECN'

option qdisc_really_really_advanced '1'

option iqdisc_opts 'docsis dual-dsthost nat ingress'

option eqdisc_opts 'docsis dual-srchost nat ack-filter'

option enabled '1'

option upload '40000'

option download '600000'

option script 'ctinfo_4layercake.qos'

root@Willthetech_Home:~#

It seems like it is not limiting my speeds... also my upload got worse.

Would you mind dropping the output from your tc -s qdisc show dev eth0 ; echo " " ; tc -s qdisc show dev ifb4eth0 command here again?

Mmmh, this looks unsuspicious to me, thanks. Next stop: powertop, to see what the core frequencies do.
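If powertop is not installed, the current core frequencies can usually also be read from sysfs. The sketch below assumes the kernel's cpufreq driver exposes `scaling_cur_freq` (in kHz) for each core, which is not the case on every system; `show_cpu_freqs` is a made-up helper name, and its optional argument only exists so a different base directory can be used for testing.

```shell
# Usage: show_cpu_freqs [BASE_DIR]
# Prints the current frequency of every core in MHz, read from
# BASE_DIR/cpuN/cpufreq/scaling_cur_freq (values are in kHz).
# The function name is hypothetical.
show_cpu_freqs() {
    base="${1:-/sys/devices/system/cpu}"
    for f in "$base"/cpu[0-9]*/cpufreq/scaling_cur_freq; do
        [ -r "$f" ] || continue       # skip if cpufreq is not exposed
        cpu=${f#"$base"/}             # strip the base directory
        cpu=${cpu%%/*}                # keep only "cpuN"
        echo "$cpu $(( $(cat "$f") / 1000 )) MHz"
    done
}
```

Running `show_cpu_freqs` once per second while a speedtest is in progress should show whether the cores actually clock up under load.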

I understand that you like qosify over sqm (which is fine) but here, both really are just tools to instantiate cake on ingress and/or egress, after they are done setting things up all that is left is identical. So I am not sure how switching to qosify is going to change anything substantial?

Could you please post links to the actual test results, so we can see more information about the latency samples under the three different test regimes?

I also agree with @_FailSafe that getting the tc -s qdisc output from just after a speedtest will be helpful.