This happened on poweroff

I posted a fixup patch for this issue

You should probably mail your patch to the mailing list for better visibility, or create a pull request. Or perhaps @nbd might be willing to have a look here. Thank you very much for the patch, by the way!


Hi, we have a problem when flow offload is enabled together with WireGuard on mt7621. I hope you can solve this issue and that it is not a bug in WireGuard.

To reproduce the bug, enable flow offload and then try to transfer data through WireGuard; the router will reboot instantly. I couldn't get a log from this, but somebody did some debugging and shared a call trace here

One interesting thing I have noticed - I can add the iptables rule with:

iptables -I FORWARD 1 -m conntrack --ctstate RELATED,ESTABLISHED -j FLOWOFFLOAD

iptables-save

However, when I restart my device, the rule no longer appears and I have to re-add it. I am new to iptables so I may be doing something wrong, but has anyone else seen/solved this?

I could add it to my startup scripts, but I would think saving it should keep it across reboots.

Device: WRT3200ACM (mvebu cortex a9)

Snapshot: Around 4/26 (not near my router right now so I can't pull the exact commit/build), from trunk

It is working great though, CPU is around 10% when maxing out my connection at 130 Mbps down.

You can add that to:

/etc/firewall.user

or, more easily, do as described here if you are on an image with that commit.

Since I am not too familiar with UCI, would it be something like the following, based on the commit you linked?

uci add firewall.defaults.flow_offloading='1'

uci commit firewall

/etc/init.d/firewall restart

Thanks for the help.

Almost right, use uci set firewall.@defaults[0].flow_offloading=1; uci commit firewall
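For reference, the corrected commands can be wrapped in a small shell function. This is only a sketch: the function name `enable_flow_offload` and the `UCI_BIN` override are hypothetical, introduced here so the commands can be dry-run with `echo` on a machine without `uci`. On the router it falls back to the real `uci` binary.

```shell
#!/bin/sh
# Sketch: enable software flow offloading through UCI.
# UCI_BIN is a hypothetical override for dry runs; it defaults to "uci".
enable_flow_offload() {
    uci_bin="${UCI_BIN:-uci}"
    # Set the option on the first (anonymous) defaults section.
    "$uci_bin" set 'firewall.@defaults[0].flow_offloading=1' || return 1
    # Write the staged change to /etc/config/firewall.
    "$uci_bin" commit firewall || return 1
}
```

Running `UCI_BIN=echo enable_flow_offload` only prints the two uci invocations. After a real run, the firewall still needs a restart (`/etc/init.d/firewall restart`, as in the earlier post) for the change to take effect.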

That worked and I verified that after restarting it is still active. Thanks!

So I have been thinking. When benchmarking my Dir-860l by connecting a PC to the WAN port and LAN port and running an iperf3 test, I can see the following results:

-WAN <-> LAN: 940 mbit (same speed as 2 devices on the same switch, hence running into gigabit ethernet limitations)

-WAN <-> LAN with SQM: 650-700 mbit

However, with my real connection I am using a PPPoE connection on the WAN side instead of IPoE. This is giving me the following speed:

-WAN <-> LAN: 500 mbit down (my connection speed), 400 mbit up (100 mbit below my connection speed) and very low CPU idle %, showing I am CPU limited.

-WAN <-> LAN: 350-400 mbit in either direction with SQM enabled.

However, when enabling hw flow offload I am seeing:

-WAN <-> LAN: 500 mbit in either direction (99% CPU idle)

-WAN <-> LAN with SQM: N/A. It is not possible to use SQM with hw flow offloading

Conclusions:

- My dir-860l is able to shape 650-700 mbit

- PPPoE is a severe bottleneck, unless hw flow offload is enabled

- But hw flow offload doesn't work with SQM

Would it be possible to:

- Apply hw flow offload on WAN <-> dummy interface. This makes sure the CPU intensive PPPoE is fully offloaded.

- Process dummy interface <-> LAN without hw flow offload, but keep software flow offload enabled. Should be fine to do this in software given my synthetic benchmarks and given that part 1) hardly costs any CPU cycles.

- Apply SQM on the dummy interface.

So basically, I have 3 questions:

- Would this be possible?

- If so, how do I apply hw flow offloading to some parts but not all, and use software flow offloading for another part?

- How do I make the traffic follow WAN <-> dummy interface <-> LAN?

Sorry for my ramblings

Are more people still seeing this issue? Unfortunately, I can only use WireGuard or hw flow offloading, not both.

I opened a ticket on flyspray for that issue. If you have the same problem, vote for it so it will be reviewed faster: https://bugs.openwrt.org/index.php?do=details&task_id=1539

It seems I am running into another bug with hw flow offload. Could anyone please confirm or deny whether they are seeing the same thing?

Usually, when nothing much is happening on my home network, there are around 100-200 active connections, as can be seen on the LuCI overview page. However, with hw flow offload enabled I started seeing thousands of active connections. Diving into the connections tab in LuCI's Realtime Graphs, I could see hundreds of connections made by a computer that was shut down over 12 hours ago. For some reason, inactive connections are not timing out and are left in the conntrack table. Disabling hw flow offload fixes the issue.

Does this sound familiar to anyone else? @nbd, is there any additional information that I can provide to help debug the issue?

Edit: Running the 18.06 branch from a few days ago: OpenWrt 18.06-SNAPSHOT r6917-8948a78
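For anyone who wants to check whether they are affected, here is a minimal sketch that counts conntrack entries per originating source address. It assumes the table is readable at `/proc/net/nf_conntrack` and that the first `src=` field on each line is the original source, which is the usual layout but may differ on your build. The function name `count_conntrack_by_src` is made up for this example.

```shell
#!/bin/sh
# Sketch: count conntrack entries per original source address.
# $1 is an optional path to a conntrack dump; the default path is an assumption.
count_conntrack_by_src() {
    awk '{
        # Only the first src= field (original direction) is counted per line.
        for (i = 1; i <= NF; i++) {
            if ($i ~ /^src=/) { n[substr($i, 5)]++; break }
        }
    }
    END { for (s in n) print n[s], s }' "${1:-/proc/net/nf_conntrack}" | sort -rn
}
```

Calling `count_conntrack_by_src` with no argument prints one line per source address, highest count first. A host that has been powered off for hours but still shows hundreds of entries would match the behaviour described above.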

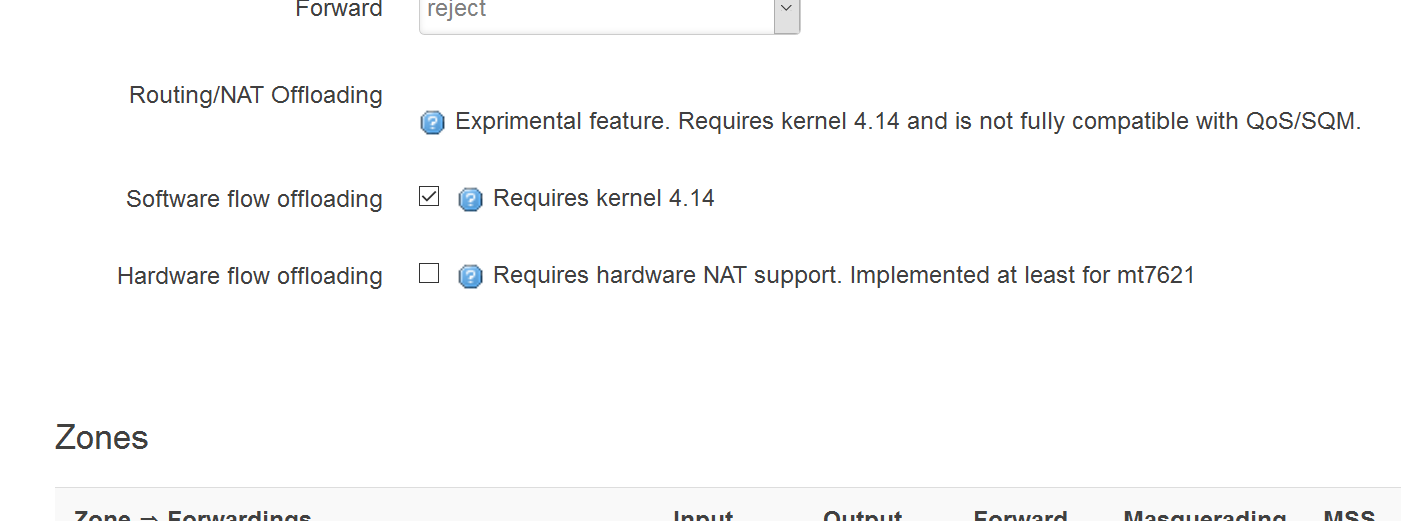

I wrote a short addition to LuCI for controlling the offloading options in the firewall config page:

In case somebody wants to patch a live router, the file is:

/usr/lib/lua/luci/model/cbi/firewall/zones.lua

Screenshot:

Neat! Will this end up in the repository as well?

As you can see from above, it is currently a pull request in LuCI, as I want some feedback first before committing it.

But it should end up in LuCI master (and 18.06) if no negative feedback comes in.

I'm seeing this on master 7044. 7500 connections open after a few hours.

Same problem. Flow offload doesn't work together with WireGuard. My target is bcm53xx.

Merged into master and 18.06

I'm using a snapshot from master because snapshots from 18.06 fail to boot on my R7500v2, and I'm glad to report a 15% bandwidth increase from enabling flowoffload while SQM is enabled, without any higher bufferbloat penalty. SQM and flowoffload in SOFTWARE mode work well together and, again, I'm glad to have an alternative to the Qualcomm NSS/rfs/etc. on my device, which just doesn't work with OpenWrt.