I have no idea what you're saying, but you're the first to log an NSS core reboot.

I think you are correct, console log messages weren't enabled... I'll enable them now.

Any errors I get I will post in the ipq806x-nss-drivers thread. Hopefully Quarky or Ansuel can help us.

client 1 -> eth1.1 -> bridge -> wan (hairpin) -> bridge -> eth1.1 -> client 1

@quarky regarding this, I noticed that when I set hairpin mode with echo 1 > /sys/class/net/br-lan/brif/eth1.1/hairpin_mode the forwarded port is actually open, but no data is received and Ethernet traffic becomes very laggy...

Actually, you should not set hairpin mode on eth1.1 unless one of the switch ports bridged to br-lan is connected to more than one subnet and br-lan is routing between those networks.
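To see which br-lan ports currently have hairpin mode set (and to undo the echo 1 from above), the flag can be read back from the same sysfs files. This is a minimal sketch, assuming the /sys/class/net/<bridge>/brif/<port>/hairpin_mode layout used above; show_hairpin, disable_hairpin and the BRIDGE_SYSFS override are hypothetical names, and the override exists only so the functions can be tried against a scratch directory.

```shell
#!/bin/sh
# Hypothetical helpers for inspecting bridge hairpin mode via sysfs.
# BRIDGE_SYSFS is a hypothetical override for testing; on the router
# it defaults to /sys/class/net.

# Print "<port> <flag>" for every port enslaved to the bridge (1 = on, 0 = off).
show_hairpin() {
    bridge="${1:-br-lan}"
    base="${BRIDGE_SYSFS:-/sys/class/net}"
    for flag in "$base/$bridge"/brif/*/hairpin_mode; do
        [ -r "$flag" ] || continue      # glob matched nothing or file unreadable
        port="${flag%/hairpin_mode}"    # strip the file name...
        port="${port##*/}"              # ...and keep only the port directory
        echo "$port $(cat "$flag")"
    done
}

# Turn hairpin mode off on one bridge port (needs root on the router).
disable_hairpin() {
    bridge="$1"
    port="$2"
    base="${BRIDGE_SYSFS:-/sys/class/net}"
    echo 0 > "$base/$bridge/brif/$port/hairpin_mode"
}
```

On the router, show_hairpin br-lan should print one line per bridge port, e.g. eth1.1 1 after the echo 1 above, and disable_hairpin br-lan eth1.1 writes the 0 back.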

I would have thought the issue was purely a matter of firewall configuration?

You want your LAN clients to be able to access your router’s WAN IP which is forwarded back to a LAN server?

^ Yes, this

It WORKS if br-lan is in promiscuous mode... but that makes the router unstable, so I want to avoid it.

EDIT:

Setting hairpin mode as I described makes the router go nuts and eat all the CPU.

I'm going to try br-lan in promiscuous mode AND the performance governor; it might be a frequency scaling issue after all...

@xeonpj Btw, if a CPU scaling issue is what's causing the reboots, you could just try:

echo performance > /sys/devices/system/cpu/cpufreq/policy0/scaling_governor

echo performance > /sys/devices/system/cpu/cpufreq/policy1/scaling_governor

It makes the router about 2 °C hotter, but it also seems more stable, and of course more responsive, since it's running at max frequency all the time.
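The two echo lines above can be generalised to however many cpufreq policies the kernel exposes. This is a minimal sketch, assuming the standard /sys/devices/system/cpu/cpufreq/policy*/scaling_governor layout; set_governor, show_governor and CPUFREQ_SYSFS are hypothetical names, and the override exists only so the functions can be tested against a scratch directory.

```shell
#!/bin/sh
# Hypothetical helpers: apply one cpufreq governor to every policy
# instead of listing policy0 and policy1 by hand.
# CPUFREQ_SYSFS is a hypothetical override used only for testing.

set_governor() {
    gov="${1:-performance}"
    base="${CPUFREQ_SYSFS:-/sys/devices/system/cpu/cpufreq}"
    for f in "$base"/policy*/scaling_governor; do
        [ -w "$f" ] || continue   # skip if the glob matched nothing or not writable
        echo "$gov" > "$f"
    done
}

# Print "<policy> <governor>" for each policy, e.g. "policy0 performance".
show_governor() {
    base="${CPUFREQ_SYSFS:-/sys/devices/system/cpu/cpufreq}"
    for f in "$base"/policy*/scaling_governor; do
        [ -r "$f" ] || continue
        p="${f%/scaling_governor}"
        echo "${p##*/} $(cat "$f")"
    done
}
```

Before switching, cat policy0/scaling_available_governors to confirm performance is listed. Like the original echo commands, the change does not survive a reboot, so it would go in /etc/rc.local to persist.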

I did it, mate; I copied your rc.local.

I have been testing the NSS build for a while and, besides slightly worse bufferbloat scores, there is a significant difference in LAN Ethernet performance:

NSS

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 48.0 MBytes 403 Mbits/sec 5 1.26 MBytes

[ 5] 1.00-2.00 sec 51.2 MBytes 430 Mbits/sec 0 1.41 MBytes

[ 5] 2.00-3.00 sec 48.8 MBytes 409 Mbits/sec 0 1.52 MBytes

[ 5] 3.00-4.00 sec 46.2 MBytes 388 Mbits/sec 0 1.61 MBytes

[ 5] 4.00-5.00 sec 47.5 MBytes 398 Mbits/sec 0 1.68 MBytes

[ 5] 5.00-6.00 sec 40.0 MBytes 336 Mbits/sec 136 1.23 MBytes

[ 5] 6.00-7.00 sec 31.2 MBytes 262 Mbits/sec 48 950 KBytes

[ 5] 7.00-8.00 sec 43.8 MBytes 367 Mbits/sec 20 714 KBytes

[ 5] 8.00-9.00 sec 58.8 MBytes 493 Mbits/sec 0 771 KBytes

[ 5] 9.00-10.00 sec 51.2 MBytes 430 Mbits/sec 33 597 KBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 467 MBytes 392 Mbits/sec 242 sender

[ 5] 0.00-10.00 sec 464 MBytes 389 Mbits/sec receiver

vs

no-NSS

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 73.4 MBytes 615 Mbits/sec 0 1.79 MBytes

[ 5] 1.00-2.00 sec 73.8 MBytes 619 Mbits/sec 0 1.79 MBytes

[ 5] 2.00-3.00 sec 80.0 MBytes 671 Mbits/sec 0 1.79 MBytes

[ 5] 3.00-4.00 sec 78.8 MBytes 661 Mbits/sec 0 1.79 MBytes

[ 5] 4.00-5.00 sec 53.8 MBytes 451 Mbits/sec 0 1.79 MBytes

[ 5] 5.00-6.00 sec 78.8 MBytes 661 Mbits/sec 0 1.79 MBytes

[ 5] 6.00-7.00 sec 81.2 MBytes 682 Mbits/sec 0 1.79 MBytes

[ 5] 7.00-8.00 sec 78.8 MBytes 661 Mbits/sec 0 1.79 MBytes

[ 5] 8.00-9.00 sec 78.8 MBytes 661 Mbits/sec 0 1.79 MBytes

[ 5] 9.00-10.00 sec 80.0 MBytes 671 Mbits/sec 0 1.79 MBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 757 MBytes 635 Mbits/sec 0 sender

[ 5] 0.00-10.02 sec 756 MBytes 633 Mbits/sec receiver
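The per-second rows are easier to compare when reduced to a few numbers: the NSS run's mean matches its sender line, but its rows swing between 262 and 493 Mbits/sec with 242 retransmits, while the no-NSS run has none. This is a small awk filter wrapped in a hypothetical iperf_summary function; it assumes plain-text iperf3 client output with bitrates in Mbits/sec, and ignores rows in other units and [SUM] rows.

```shell
#!/bin/sh
# Hypothetical helper: summarise iperf3 per-interval rows read on stdin.
# Assumes the plain-text client layout shown above, e.g.
#   [ 5] 0.00-1.00 sec 48.0 MBytes 403 Mbits/sec 5 1.26 MBytes
# which awk splits into 11 fields: $7 = bitrate, $8 = unit, $9 = retransmits.
# Header, divider and sender/receiver summary rows do not match and are skipped.
iperf_summary() {
    awk '
        $1 == "[" && $4 == "sec" && $8 == "Mbits/sec" && NF == 11 {
            v = $7 + 0                     # force numeric comparison
            n++
            sum += v
            retr += $9
            if (n == 1 || v < min) min = v
            if (n == 1 || v > max) max = v
        }
        END {
            if (n == 0) { print "no interval rows found"; exit 1 }
            printf "intervals=%d mean=%.1f min=%g max=%g retr=%d\n", n, sum / n, min, max, retr
        }
    '
}
```

Piping the NSS rows above through iperf_summary prints intervals=10 mean=391.6 min=262 max=493 retr=242; the no-NSS rows give intervals=10 mean=635.3 min=451 max=682 retr=0.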

What might be the reason for such a difference?

Something is wrong with LAN under NSS; there are several things that cause problems. Hopefully, once the experts find the cause, everything will work perfectly.

Sorry for the dumb question, but is there any benefit to running this NSS build vs a standard build (e.g. hnyman) when your ISP connection is only 100Mbit?

Those are odd results. I get high-500 / low-600 Mbps on wireless and full line speed on wired LAN.

What does your client/server setup look like, and what iperf settings are you using?

@zabolots honestly, with a 100 Mbps ISP connection you are better off with standard OpenWrt. I’d run SQM with cake at that speed and enjoy excellent latency. My build is better suited to 500 Mbps+ speeds, where it squeezes out every bit of performance for faster internet connections.

I was testing from one R7800 (in AP mode) to another R7800 acting as the router, over copper only. Nothing fancy for iperf3 options: iperf3 -s and iperf3 -c hostname.

R7800s will run out of CPU if they act as the iperf client or server. The router or the AP should only be the “in between”, never the iperf server or receiver at these speeds. R7800s work great as iperf endpoints at much slower speeds, but when it comes to maxing out line rate or a 2x2 Wi-Fi 5 wireless connection, the R7800's CPU falls flat on its face and can’t keep up.

I’d test wired performance with two wired PCs, and wireless with a wired PC plus a wireless client. Here's what it looks like with a wired PC as the iperf server, an iPhone 13 as the Wi-Fi client, and an R7800 in between:

ath10k-ct, 5.10 Kernel with NSS Hardware Offloading

[SUM] 0.00-30.01 sec 2.30 GBytes 659 Mbits/sec receiver

[SUM] 0.00-30.01 sec 1.99 GBytes 569 Mbits/sec 189 sender

ath10k (OpenWrt with no offloading)

[SUM] 0.00-30.01 sec 1.60 GBytes 459 Mbits/sec receiver

[SUM] 0.00-30.01 sec 1.14 GBytes 326 Mbits/sec 699 sender

ath10k-ct (OpenWrt with no offloading)

[SUM] 0.00-30.01 sec 1.53 GBytes 437 Mbits/sec receiver

[SUM] 0.00-30.01 sec 1.21 GBytes 347 Mbits/sec 763 sender

I've done some more tests, and apparently the 'worse' performance only shows up when testing from the access point (non-NSS) towards the NSS-enabled router. In the opposite direction it runs at wire speed, so there's no issue.

I waited a few days with no change, so I filed a bug report. I haven't found a similar report; if this is truly a typo in the package code, I can't imagine I'm the only one who has run into it in the past week(s). The last change on OpenWrt is from February 28, 2021, so the coding error may very well be in the upstream GNU findutils release?

Haven't you tried ath10k with offloading? Are you using 80 MHz or 160 MHz? With plain ath10k I get no advantage from a 160 MHz channel width (if anything, I get less performance), but even at 80 MHz it's not bad at all (uptime over 14 days).

This 21.02 is so damn good...

I haven’t tried ath10k with NSS offloading in a couple of months (I’ve been running ath10k-ct). Performance of ath10k and ath10k-ct is similar for my clients, so I just run -ct.

Those are great results! Next time I build I’ll do some iperf testing with normal ath10k to have those results included.

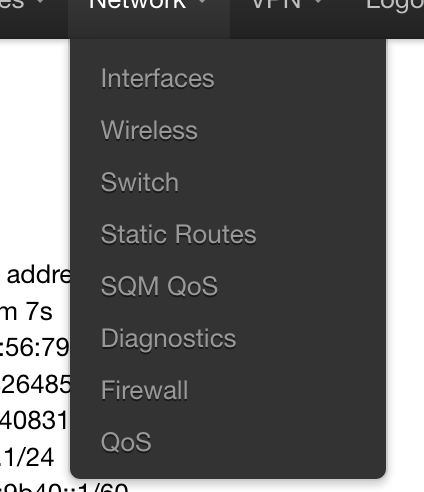

Sorry, maybe a stupid question, but where are DHCP and DNS under Network in LuCI?