There is no one at this time on the network, only me.

The only thing that has changed is that I had to change the switch to make it work at 1Gb.

I think it's time to go back to the previous firmware (22.03) that worked perfectly for me, do tests, upload them and from there decide which firmware and with which modifications to install to continue improving. @Mpilon has said it better than me.

I stopped using irqbalance, and had another incompatible feature enabled ... Without those running, 5.10-based code is solid.

I did have a stall and reboot trying to test 5.15.

Not sure if you’ve noticed it, but I’m running a master build without NSS but with Ansuel's 5.15 fixes for this problem. I've had 2 random reboots since last Monday, when I flashed that build to test the fixes. He got a clue about where to look. I ran this test without packet steering and without irqbalance. I posted the ramoops files to the PR.

The firmware he is using is different from the one I use.

Mine is based on @qosmio's 5.15-qsdk11 and @vochong's is based on @qosmio's 5.15-qsdk11-new-krait-cc.

Qosmio 5.15-qsdk11-new-krait-cc (with irqbalance sent into oblivion) works great for me. NSS fq_codel works well too. I built it with non-ct ath10k firmware v157.

Great work @Qosmio!

git branch

* 5.15-qsdk11-new-krait-cc

uname -a

Linux America 5.15.69 #0 SMP Thu Sep 22 21:03:12 2022 armv7l

# lsmod | grep nss

nss_ifb 16384 0

ppp_generic 40960 6 ecm,pptp,ppp_async,qca_nss_pppoe,pppoe,pppox

pppoe 24576 2 ecm,qca_nss_pppoe

qca_nss_drv 581632 5 nss_ifb,ecm,mac80211,qca_nss_qdisc,qca_nss_pppoe

qca_nss_gmac 65536 1 qca_nss_drv

qca_nss_pppoe 16384 0

qca_nss_qdisc 114688 5

My image based on Qosmio 5.15-qsdk11-new-krait-cc also has all of Ansuel's latest changes for 5.15. I happened to see the RCU stalling-related reboot (with irqbalance enabled) when I was just looking at the router's console.

I’m glad it worked out!

Side note: I HIGHLY discourage using irqbalance for this platform. It’s overkill, and quite frankly causes more harm than good. Two things that are sure to cause an oops are moving adm_dma and the nss cores too often. It’s better to just pin them after boot. I have this in my /etc/rc.local and it has served me well since @quarky started the NSS builds on 4.4. This should work in either NSS or non-NSS builds.

# smp_affinity takes a hex CPU bitmask: 1 = first core, 2 = second core
# move nss cores to cpu1 and cpu2
i=1
awk '$7=="nss"{gsub(":","");print $1,$7}' /proc/interrupts | while read num irq; do
    echo $i > /proc/irq/$num/smp_affinity
    i=$((i+1))
done

# move rpm, and usb to cpu2
awk '$7~/qcom_rpm_ack|xhci-hcd/{gsub(":","");print $1,$7}' /proc/interrupts | while read num irq; do
    echo 2 > /proc/irq/$num/smp_affinity
done
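
For anyone puzzled by the numbers being echoed there: smp_affinity takes a hexadecimal CPU bitmask, so 1 selects the first core and 2 the second. Below is a small sketch of two helpers for checking things before pinning. `cpu_mask` and `irqs_named` are names made up for this example, not part of the rc.local script, and matching on the last column instead of `$7` is an assumption about how /proc/interrupts is laid out on the device.

```shell
#!/bin/sh
# cpu_mask N: print the hex smp_affinity bitmask that selects only CPU N
# (0-based, so "cpu_mask 0" is the first core).
cpu_mask() {
    printf '%x\n' $((1 << $1))
}

# irqs_named NAME [FILE]: print the IRQ numbers whose last column equals NAME.
# FILE defaults to /proc/interrupts; the optional argument only exists so the
# function can be tried against a saved copy of that file.
irqs_named() {
    awk -v name="$1" '$NF == name { sub(":", "", $1); print $1 }' "${2:-/proc/interrupts}"
}
```

On the router, something like `for n in $(irqs_named nss); do cat /proc/irq/$n/smp_affinity; done` should show whether the pinning took effect after boot.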

Did you get qca-nss-clients built?

It still throws an error.

Before compiling I get this

WARNING: Makefile 'package/feeds/nss/qca-nss-clients/Makefile' has a dependency on 'kmod-qca-nat46', which does not exist

At the end I get these errors.

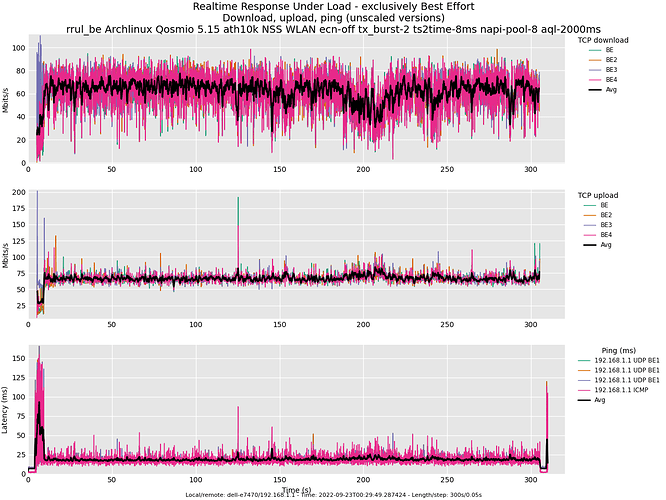

That's a very good result! Roughly equal sharing up/down & latency below 20ms after the initial load spike from TCP's slow start. That might be mitigated somewhat with cake's split-gso on the server.

Another interesting test is to start that and walk slowly away from the AP. The bandwidth should go down, and latency stay fairly flat, until the rate controller starts grasping for the right rate and other factors like hardware retries start showing up.

To be OCD, the load spike from slow start shouldn't have been that bad, and I'm not convinced we are FQing enough at a low enough level.

The proof of the pudding is another client on the network doing the same test and not observing more than a few ms of extra load or spike when this client starts up.

Looks nice and flat, steady with very “small” sawtooth edges. What’s your current Internet connection like in speed? Mine just got upgraded today to 1/1 Gbit/s. Would it be interesting to see NSS fq_codel at those speeds? Speedtest with wired gives me about 850Mbit/s with 5.10 without these latest adjustments. Like @Mpilon said; make a baseline test first, then flash a build with the latest and greatest and repeat the same test.

I wanted to point out that the black line is an average of four flows, so to get the real bandwidth, multiply by four. There are 20+ other plots in the flent-gui, and sometimes looking at a totals plot is helpful. My favorite method, after looking at this level of detail for any anomalies, is using the cdf plots to combine test runs (via add->other files) so as not to be misled. Having a good "database" of what good runs looked like, and the causes of bad runs, would be nice, and so I encourage folk to share their flent.gz files in the hope that one day we'll be able to train an AI to look at a result and say, "Hmm.... looks like you have a 100Mbit interface on that test series...."

Anyway, the 160, 80, and 20MHz tests on a 100Mbit interface show a result that may or may not be interesting: in the 160 case the wifi should not be a bottleneck on the down at all, but TSQ on the client up should have held observed local latencies below 6ms, not 10ms, and I'm suspecting that's the switch doing the extra buffering at this rate!

A straight tcp_ndown test, on 100Mbit ethernet, over 160MHz wifi, should show ping latencies below 2ms. If it doesn't, slapping cake with split-gso on the server's interface might help (at this 100Mbit), as by default the linux tcp stack makes too many big TSO packets nowadays for fq_codel to cope with properly.

At 20MHz the bottleneck has shifted to the wifi, and the behavior of fq_codel there dominates.

A --socket-stats tcp_nup test from this client (which I assume is hooked to the ap->1gbit switch->100mbit interface) accumulates packets in both the wifi and the switch's buffer. I don't have any good data on how much buffering modern cheap switches do; I assume it's getting bigger and bigger. A way to test that assumption and size the switch's buffer would be to set the server to 10Mbit and do that tcp_nup. A way to "fix" switch overbuffering is to rate limit the port to the actual rate of the port via cake and a vlan. (Lest you think this is excessively fixing a non-problem, ethernet over powerline rarely achieves 100mbit, and has insane (seconds) buffering, and it's best to measure those devices and rate limit them via cake.)
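
To make the "size the switch's buffer" idea concrete, here is a back-of-the-envelope sketch. It assumes the extra ping latency seen under load comes entirely from one full queue draining at the bottleneck rate. `queue_bytes` is a hypothetical helper, and the numbers are made up, not measurements from this thread.

```shell
#!/bin/sh
# queue_bytes EXTRA_MS RATE_MBIT
# Bytes that must be sitting in a queue to add EXTRA_MS milliseconds of
# delay on a link draining at RATE_MBIT megabits per second.
# 1 Mbit/s for 1 ms is 1000 bits, which is 125 bytes.
queue_bytes() {
    echo $(( $1 * $2 * 125 ))
}

# Made-up example: 100 ms of extra latency with the server forced to 10 Mbit.
queue_bytes 100 10    # prints 125000
```

If the estimate stays roughly the same when the test is repeated at a different forced rate, that would hint at a fixed-size buffer in the switch rather than something time-based.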

So nice to see ya "getting" what a good sawtooth looks like. thx!

One thing (as per your suggested test at a gbit on your new link) is that the sawtooths get deeper at higher rates, so getting used to how the test "evolves" when the rates go up kind of helps too.

There are a lot of people that don't "like" it when, for example, on a single flow, cubic gets a drop and cuts the rate by 1/3 or tcp reno, by a half, and then only slowly, grows back, wondering, where did my "1gbit" go? Why did I only get 830Mbits on this short test? They then push the buffering to where they see a gbit for a single flow and declare victory, not realizing they just broke congestion control for more than one flow and wrecked the videoconferencing and gaming performance.

Speedtest, this test, and most others, use a bunch of flows, that disguise the bandwidth changes in the sawtooth (tho rrul shows it clearly), and can actually lead to better results with overly short buffering than is desirable. Underbuffering is increasingly a problem at +1gbit. And so it goes...

You may want to update your feeds properly and "make clean" before trying to build again.

make[3] -C feeds/packages/net/vnstat compile

make[3] -C feeds/luci/applications/luci-app-wireguard compile

make[3] -C feeds/nss/qca-nss-clients compile <====

make[3] -C package/network/utils/iproute2 compile

make[3] -C feeds/packages/utils/lvm2 compile

make[3] -C feeds/packages/utils/ntfs-3g compile

I built successfully with nss pppoe, but I did not test its actual functionality because my ISP does not use PPPoE. I'm too lazy to set up a PPPoE server to test it locally like I did before for Tishipp patches.

It was done very late at night. I'm sure there was only one WIFI client sending/receiving the traffic during the test.

I am now compiling a new kernel based on the 5.15-new-krait-cc branch. No errors so far, but I got a warning:

"Not overriding core package 'nat46' ; use -f to force"

I have graphs from version 22.03, which I installed 10 hours ago, that I will upload later to compare with the ones I published yesterday.

Right now I'm in a video conference (advantages of being the boss) and I can't publish the data but I anticipate that the latency problem seems to come from the ax210 client


Oh well, I don't know what "properly" means beyond the usual

./scripts/feeds update -a

./scripts/feeds install -a

and what can be cleaner than starting from scratch every time? But let me try with today's @qosmio code; I think I can build only the pppoe package.

Hello,

i tried this command after:

- cloning your repo

- feeds update and install

- .config setup

but i fear i missed something, since i get an error very soon..

massi@greenbook:~/rutto/qosmio/openwrt-ip806x$ make package/{qca-nss-drv,qca-nss-clients,qca-nss-ecm}/{clean,compile} V=sc -j$(nproc)

make[2]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x/scripts/config'

make[2]: 'conf' is up to date.

make[2]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x/scripts/config'

time: target/linux/prereq#0.19#0.03#0.20

make[1]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x'

make[2]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x/feeds/nss/qca-nss-drv'

rm -rf /home/massi/rutto/qosmio/openwrt-ip806x/build_dir/target-arm_cortex-a15+neon-vfpv4_musl_eabi/linux-ipq806x_generic/qca-nss-drv-2020-03-20-3cfb9f43

rm -f /home/massi/rutto/qosmio/openwrt-ip806x/staging_dir/target-arm_cortex-a15+neon-vfpv4_musl_eabi/stamp/.qca-nss-drv_installed

rm -f /home/massi/rutto/qosmio/openwrt-ip806x/staging_dir/target-arm_cortex-a15+neon-vfpv4_musl_eabi/packages/qca-nss-drv.list

make[2]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x/feeds/nss/qca-nss-drv'

time: package/feeds/nss/qca-nss-drv/clean#0.21#0.03#0.21

make[1]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x'

make[2]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x/scripts/config'

make[2]: 'conf' is up to date.

make[2]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x/scripts/config'

make[1]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x'

make[2]: Entering directory '/home/massi/rutto/qosmio/openwrt-ip806x/feeds/nss/qca-nss-gmac'

make[2]: *** No rule to make target '/home/massi/rutto/qosmio/openwrt-ip806x/build_dir/target-arm_cortex-a15+neon-vfpv4_musl_eabi/linux-ipq806x_generic/linux-5.15.69/.config', needed by '/home/massi/rutto/qosmio/openwrt-ip806x/build_dir/target-arm_cortex-a15+neon-vfpv4_musl_eabi/linux-ipq806x_generic/qca-nss-gmac-2021-04-20-17176794/.built'. Stop.

make[2]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x/feeds/nss/qca-nss-gmac'

time: package/feeds/nss/qca-nss-gmac/compile#0.24#0.03#0.23

ERROR: package/feeds/nss/qca-nss-gmac failed to build.

make[1]: *** [package/Makefile:116: package/feeds/nss/qca-nss-gmac/compile] Error 1

make[1]: Leaving directory '/home/massi/rutto/qosmio/openwrt-ip806x'

make: *** [/home/massi/rutto/qosmio/openwrt-ip806x/include/toplevel.mk:231: package/qca-nss-drv/compile] Error 2
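
The `No rule to make target ... linux-5.15.69/.config` line suggests the kernel tree has not been prepared in this checkout yet, and the kmod packages need it before they can compile. Here is a minimal sketch of a pre-check, assuming it is run from the top of the OpenWrt tree. `kernel_config_present` is a hypothetical helper, and the glob just mirrors the path layout visible in the log above.

```shell
#!/bin/sh
# kernel_config_present [TOPDIR]
# Succeeds if some build_dir/target-*/linux-*/linux-*/.config exists under
# TOPDIR (default: current directory), i.e. the kernel tree has been prepared.
kernel_config_present() {
    for f in "${1:-.}"/build_dir/target-*/linux-*/linux-*/.config; do
        [ -f "$f" ] && return 0
    done
    return 1
}

if kernel_config_present .; then
    echo "kernel tree is prepared"
else
    echo "kernel .config missing: build target/linux first"
fi
```

If it reports the config as missing, the thing to try would be building the kernel first with `make target/linux/compile V=s` (with tools and toolchain already built, which a fresh clone will not have), then rerunning the package command.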