Can you upload your settings please?

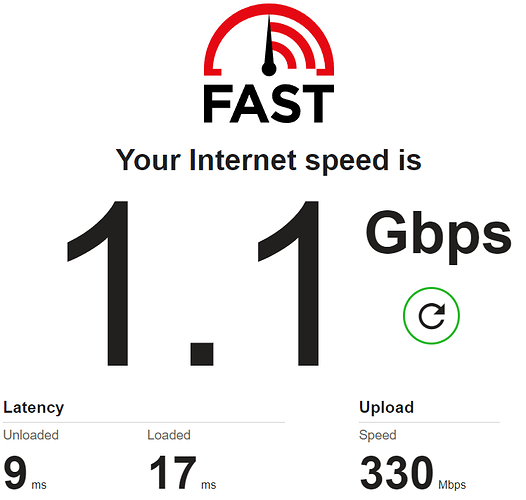

I am getting 938 Mbps:

Test Complete. Summary Results:

[ ID] Interval Transfer Bandwidth

[ 4] 0.00-28.65 sec 533 MBytes 156 Mbits/sec sender

[ 4] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 6] 0.00-28.65 sec 518 MBytes 152 Mbits/sec sender

[ 6] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 8] 0.00-28.65 sec 307 MBytes 89.9 Mbits/sec sender

[ 8] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 10] 0.00-28.65 sec 287 MBytes 84.0 Mbits/sec sender

[ 10] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 12] 0.00-28.65 sec 269 MBytes 78.9 Mbits/sec sender

[ 12] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 14] 0.00-28.65 sec 248 MBytes 72.5 Mbits/sec sender

[ 14] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 16] 0.00-28.65 sec 258 MBytes 75.5 Mbits/sec sender

[ 16] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 18] 0.00-28.65 sec 274 MBytes 80.4 Mbits/sec sender

[ 18] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 20] 0.00-28.65 sec 225 MBytes 65.8 Mbits/sec sender

[ 20] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[ 22] 0.00-28.65 sec 286 MBytes 83.8 Mbits/sec sender

[ 22] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver

[SUM] 0.00-28.65 sec 3.13 GBytes 938 Mbits/sec sender

[SUM] 0.00-28.65 sec 0.00 Bytes 0.00 bits/sec receiver
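As a quick sanity check on the summary above, the per-stream sender rates can be added up and compared with the `[SUM]` line. This is only a sketch: the lines are copied from the output above, and the regular expression is my own assumption about the layout, not anything iperf3 itself provides.

```python
import re

# Sender lines copied from the iperf3 summary above.
SUMMARY = """\
[ 4] 0.00-28.65 sec 533 MBytes 156 Mbits/sec sender
[ 6] 0.00-28.65 sec 518 MBytes 152 Mbits/sec sender
[ 8] 0.00-28.65 sec 307 MBytes 89.9 Mbits/sec sender
[ 10] 0.00-28.65 sec 287 MBytes 84.0 Mbits/sec sender
[ 12] 0.00-28.65 sec 269 MBytes 78.9 Mbits/sec sender
[ 14] 0.00-28.65 sec 248 MBytes 72.5 Mbits/sec sender
[ 16] 0.00-28.65 sec 258 MBytes 75.5 Mbits/sec sender
[ 18] 0.00-28.65 sec 274 MBytes 80.4 Mbits/sec sender
[ 20] 0.00-28.65 sec 225 MBytes 65.8 Mbits/sec sender
[ 22] 0.00-28.65 sec 286 MBytes 83.8 Mbits/sec sender
[SUM] 0.00-28.65 sec 3.13 GBytes 938 Mbits/sec sender
"""

# Assumed line layout: [id] interval "sec" amount unit rate "Mbits/sec sender"
LINE_RE = re.compile(
    r"\[\s*(\d+|SUM)\]\s+\S+\s+sec\s+\S+\s+\S+\s+([\d.]+)\s+Mbits/sec\s+sender"
)

def parse_sender_rates(text):
    """Return (per-stream rates in Mbit/s, reported SUM rate or None)."""
    streams, reported = [], None
    for stream_id, rate in LINE_RE.findall(text):
        if stream_id == "SUM":
            reported = float(rate)
        else:
            streams.append(float(rate))
    return streams, reported

streams, reported = parse_sender_rates(SUMMARY)
print(f"{len(streams)} streams, sum {sum(streams):.1f} Mbit/s, reported {reported} Mbit/s")
```

The small gap between the two figures is consistent with each per-stream value being rounded before printing.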

I am using quite a synthetic method to see the highest throughput: iperf3 sending 10 parallel data streams between an iperf3 server on the WAN and a Windows 11 laptop connected wirelessly on a 160 MHz channel, while I listen to online radio with a low buffer on another PC that is also connected to the E8450 wirelessly. This is pretty much the out-of-the-box performance, as I have not yet decided which SSL library to use and I am testing performance with different sets. The test was done using openssl, but I am getting the same results using wolfssl.

This is the list of custom packages I used in imagebuilder:

nano-plus htop ncdu iperf3 irqbalance auc ca-certificates -wpad-basic-wolfssl wpad-wolfssl openvpn-wolfssl luci-ssl -wpad-basic-wolfssl -libustream-wolfssl -px5g-wolfssl wpad-openssl libustream-openssl luci-ssl-openssl luci luci-compat luci-mod-dashboard luci-app-attendedsysupgrade luci-app-vnstat2 luci-app-nlbwmon luci-app-adblock luci-app-banip luci-app-bcp38 luci-app-commands luci-app-ddns ddns-scripts-noip luci-app-openvpn -luci-ssl-openssl luci-app-sqm luci-app-wireguard luci-app-upnp luci-app-uhttpd luci-app-statistics collectd-mod-conntrack collectd-mod-cpu collectd-mod-cpufreq collectd-mod-dhcpleases collectd-mod-entropy collectd-mod-exec collectd-mod-interface collectd-mod-iwinfo collectd-mod-load collectd-mod-memory collectd-mod-network collectd-mod-ping collectd-mod-rrdtool collectd-mod-sqm collectd-mod-thermal collectd-mod-uptime collectd-mod-wireless blockd cryptsetup e2fsprogs f2fs-tools kmod-fs-exfat kmod-fs-ext4 kmod-fs-f2fs kmod-fs-hfs kmod-fs-hfsplus kmod-fs-msdos kmod-fs-nfs kmod-fs-nfs-common kmod-fs-nfs-v3 kmod-fs-nfs-v4 kmod-fs-vfat kmod-nls-base kmod-nls-cp1250 kmod-nls-cp437 kmod-nls-cp850 kmod-nls-iso8859-1 kmod-nls-iso8859-15 kmod-nls-utf8 kmod-usb-storage kmod-usb-storage-uas libblkid ntfs-3g nfs-utils ip6tables-mod-nat 6in4 6rd 6to4 ip6tables-nft

@YesNO please think through the superb and carefully crafted advice by @elan above, which will have taken time to put together.

How would that change if I throw a VPN in the mix? If I try to maximize OpenVPN (client) or Wireguard speed on this router, should I enable flow offloading?

fq_codel isn't that "obsolete" that it requires bolding (especially in this context).

Although cake is hyped here, it is not perfect for all situations, as it may try a bit too much classification and is thus too CPU intensive at the highest speeds, as you imply.

For Gigabit speeds the SQM simple.qos/fq-codel (or simplest.qos) may offer the needed QoS but be much lighter for the CPU.

Fully agree with that.

The measured top speed is pretty irrelevant for most users, as the real-life internet traffic will never reach that except for really short bursts (or the speedtests).

Lol, nah. I've just paid £90 for this after people saying this is the recommended router to get on IRC. it's crap, can't reach anywhere near 1gbps speeds and the latency is a joke!

So one of cake's goals was to make setting up competent AQM simple for novice users (reducing the need for, and complexity of, set-up scripts like sqm-scripts). IMHO it mostly succeeded.

The other big goal, however, was reducing the CPU cycle cost of the often required traffic shaper, and that part did not succeed: in the end cake is even more CPU hungry than HTB+fq_codel, although in fairness it also does more. But doing more is not helpful when CPU cycles are scarce.

As it stands, neither is obsolete, and neither is as clear a win over the other as fq_codel was over single-queue codel.

No. Quote ALL of what I said, not just a bit.

People over on Reddit and IRC are recommending this router. I have no idea why, you can't reach 1gbps with it and even if you use fq_codel, your latency is crap!

I might as well go back to my old router, at least I could hit 1gbps with it. lmao

This is WITHOUT the CAKE script running so NO fq_codel: https://www.waveform.com/tools/bufferbloat?test-id=cf9da65d-5e2b-4202-bfab-b4a12d2ae7c1

What a joke of a system!!!!!!

I have a 1 Gbps/500 Mbps fiber connection and an MT7622-based router, like the RT3200. I don't have any particular settings on my router: no sqm, only hardware and software offloading enabled.

i do my test on fast.com , and i don't see any problem at all ....

Great that you are taking things with humor...

The point is low-latency traffic shaping is quite CPU demanding (not so much throughput, but low-delay), and the actual load depends on packet size, and the available CPU cycles depend on how much other work a router needs to do. Traffic shaping @1Gbps is quite a lot of work even with maximum sized packets (1538 for ethernet):

1000*1000^2/((1500+38)*8) = 81274.4 pps

or

1000/(1000*1000^2/((1500+38)*8)) = 0.012304 milliseconds per packet...
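The arithmetic above can be sketched as a small script that also shows how the per-packet time budget shrinks with packet size. The 38 bytes of per-packet overhead are taken from the post; the smaller payload sizes (576 and 46 bytes) are illustrative assumptions of mine, not measurements.

```python
# Per-packet overhead on the wire, as used in the post (1500 + 38 = 1538 bytes).
ETHERNET_OVERHEAD_BYTES = 38

def packets_per_second(rate_mbps: float, payload_bytes: int) -> float:
    """Packets per second needed to fill rate_mbps with packets of this size."""
    bits_on_wire = (payload_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return rate_mbps * 1000**2 / bits_on_wire

def microseconds_per_packet(rate_mbps: float, payload_bytes: int) -> float:
    """Time budget per packet, in microseconds, at the given rate."""
    return 1e6 / packets_per_second(rate_mbps, payload_bytes)

# 1500 is the case from the post; 576 and 46 are assumed example sizes.
for payload in (1500, 576, 46):
    pps = packets_per_second(1000, payload)
    budget = microseconds_per_packet(1000, payload)
    print(f"{payload:>5} B payload: {pps:>12.1f} pps, {budget:7.3f} us per packet")
```

With full-size packets the shaper has roughly 12 microseconds per packet at 1 Gbps; with small packets the budget drops to well under a microsecond, which is why the actual load depends so much on packet size.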

IMHO traffic shaping @~1Gbps is possible with a few consumer-grade non-x86 routers, but typically there are few reserves for the unexpected, so depending on what else a router does you will fail to achieve the ~1Gbps throughput.

For example my turris omnia, when streamlined to only do minimal other chores (like no wifi) and with all configuration tricks I managed to come up with (manually adjusted packet-Steering) allowed bidirectionally saturating traffic shaping at 550/550 Mbps (or unidirectional 1 Gbps), but adding a few more services degraded that shaping performance considerably (my access link is only running at 116/37 Mbps, so even with other duties traffic shaping with cake is no problem).

I guess the issue here is to come up with the correct expectations. Since 1 Gbps ethernet interfaces have become ubiquitous and many cheap routers manage to do NAT/PPPoE/firewalling at 1 Gbps rates, one intuitively assumes network processing at 1 Gbps to be a piece of cake. Once one realises that OEM firmware often only achieves throughput close to 1 Gbps by employing accelerators, it becomes clear that the main CPUs of those routers often are not up to the task of doing much at 1 Gbps. These accelerators are often very specialized and only accelerate, say, PPPoE under very specific conditions and not generically: think unencrypted PPPoE running like a bat out of hell, while encrypted PPPoE (I do not know of any ISP actually using that, so this is a thought experiment) would probably be punted to the router's main CPU and hence achieve considerably less throughput. Traffic shaping, however, typically is not something offered by those accelerators, so sqm/cake do not profit from them, and hence running cake/sqm will expose a router's raw CPU capabilities, which often are not as high as expected.

And for good reason, as far as I can tell it is the only/best-supported WiFi6 router under OpenWrt...

Well, we can have a look there if you want. I would need the output of:

ifstatus wan

cat /etc/config/sqm

tc -s qdisc

tc -d qdisc

as well as the link to a result of a dslreports speedtest, configured like this (please note the dslreports speedtest is somewhat in decline, but it still offers a few unique pieces of information).

It's not something we support in our drivers (afaik) and it will require quite specific tc setup (ie. using specific queuing disciplines), but it can actually be done in hardware by many common router SoCs including the MT7622:

HW QoS: Seamlessly co-work with HW NAT engine, SFQ w/ 1k queues

64 hardware queues to guarantee the min/max bandwidth of each flow

Is it something that could be supported in the future? Does this include the specific SOC, MT7622BV, in the RT3200?

Afaik all MT7622 variants should support that feature.

To support it in a future OpenWrt, someone would need to write a tc-offloading driver. The infrastructure for this is already present in the kernel, so it's probably not terribly hard to implement. Afaik nobody is working on that at the moment, and I also have no idea if and how it is implemented in the MediaTek SDK kernel.

I guess for egress it would be enough to just offload the actual traffic shaper and use BQL to create back pressure into a normal kernel qdisc. For ingress, however, I am not sure that would work, and we would need to move the whole qdisc into the accelerator (like for the NSS cores in the r7800). All of this is, however, far outside my area of expertise... My personal solution is to use a primary router with a sufficiently powerful CPU for the required traffic shaping needs (often a Raspberry Pi 4B will do, or one of the alternative ARM-based SBCs with 2 ethernet ports), and use something like the E8450/RT3200 as AP, but I understand that this is not really as attractive as having a single WiFi router that "does it all".

ALL I changed was my DHCP DNS address to 8.8.8.8 and 8.8.4.4 and: https://www.waveform.com/tools/bufferbloat?test-id=d8d170fc-c293-422c-93e2-d9f3f46b3d44

I think I found a bug

I went up 50mbps in kbits and went all the way to 1950. up to 950 I was getting a latency below +10 - above that it would go to around +90......

But if I go back to 950 and run a test on the bufferbloat site, it hits +90; if I then add a 0, apply, and remove the 0 again, it stays under +10.

Well, different DNS servers can direct you to different nodes in Cloudflare's network, and these can be loaded differently. This might indicate that throughput and latency in the Waveform test are not limited by your own router...

It would be interesting to see:

- output of tc -s qdisc (copy and paste the output as 'Preformatted Text')

- run a speedtest and post the results here (copy and paste the result link)

- immediately after the speedtest finishes run tc -a qdisc again (copy and paste the output as 'Preformatted Text')

Possible, but still inconclusive, as it is not clear whether Waveform/Cloudflare works well for you. You can realistically only address the bufferbloat on your access link, but the Waveform test will report the aggregate bufferbloat, including bloat on segments of the path outside of your home network.