I don't feel like wading through these specs today, or even this month, but I hope someone does.

It's pretty close with Raptor. IIRC you can make blocks of up to about 8k symbols, and I don't know of any real limits on the symbol size except that it must be a multiple of 64 bytes and (if you're building multicast this way) fit inside a UDP packet. So if you pick a symbol size of 1280 bytes you can encode up to a 10MB block, and the limit on repair symbols you can generate is something like 56k IIRC. If you make your symbols (and block size) smaller, you can put more symbols into a single packet, which can reduce how much extra you need.
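A quick sketch of that block-size arithmetic. The 8192 figure is my stand-in for "about 8k" and is an assumption, not a number taken from the spec; the multiple-of-64 rule is the one recalled above.

```python
# Rough block-size arithmetic for the figures quoted above.
# MAX_SOURCE_SYMBOLS is an assumed stand-in for "about 8k symbols".
MAX_SOURCE_SYMBOLS = 8192


def max_block_bytes(symbol_size: int, max_symbols: int = MAX_SOURCE_SYMBOLS) -> int:
    """Largest block (in bytes) that fits in max_symbols symbols of symbol_size bytes."""
    if symbol_size <= 0 or symbol_size % 64 != 0:
        raise ValueError("symbol size must be a positive multiple of 64 bytes")
    return symbol_size * max_symbols


print(max_block_bytes(1280))  # 10485760 bytes, i.e. about 10MB
```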

If you're missing some source data, the number of repair symbols you need to rebuild your block is probabilistic. I think it starts with needing at least 2 extra symbols, so if your source was 7000 symbols, you need at least 7002 total to attempt a decode with something like a 98% chance of success, and it passes 99.9% at, I think, 5 extra symbols. As sender you decide how much redundancy to provide, so if you're anticipating a network loss of up to 1%, you can run it with 2% redundancy on your repair and have plenty of extra. You can probably get away with 1.01% redundancy or so from the sender to cover a steady 1% loss; how much you provide just depends on how tight you want to run it.
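To put numbers on that, here is a small sketch. It assumes a perfectly steady loss rate (real loss is bursty) and treats the "extra symbols" count as a fixed overhead; the function name is made up for illustration.

```python
import math


def packets_to_send(k: int, overhead: int, loss: float) -> int:
    """Packets the sender must emit so that, at a steady loss rate,
    at least k + overhead symbols are expected to arrive."""
    if not 0.0 <= loss < 1.0:
        raise ValueError("loss must be in [0, 1)")
    return math.ceil((k + overhead) / (1.0 - loss))


k = 7000
total = packets_to_send(k, overhead=2, loss=0.01)
repair = total - k
print(total, repair, f"{100 * repair / k:.2f}%")  # 7073 73 1.04%
```

So with 2 extra symbols on a 7000-symbol block, a steady 1% loss works out to roughly 1.04% redundancy, in the same ballpark as the 1.01% guess.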

From the Wikipedia article: "For example, with the latest generation of Raptor codes, the RaptorQ codes, the chance of decoding failure when k encoding symbols have been received is less than 1%, and the chance of decoding failure when k+2 encoding symbols have been received is less than one in a million."

so basically you always decode it with k+2 and you really really always decode it with k+3

Any more Blue Sky?

A network browser gui. Identify machines and the services they are offering. Click on machines and set QoS preferences for various purposes.

A GUI queue hierarchy constructor. Let people set up HFSC with 4 classes and qfq below it and fq_codel below that etc. And set up nftables rules and tx filters to classify things with GUI point and click

A high end wizard that sets up recommended network segmentations, main LAN, business LAN, DMZ, iot, kids subnet etc

https://reproducible-builds.org/reports/2021-11/ I like diffoscope. I am having great difficulty trusting anything anymore.

@dlakelan - can I get you to think outside your box and about what your grandmother, or your local coffee shop, or a small business, would like?

A coffee shop or small business wants to boot up the thing and enter http://myrouter.lan which is written on the box, then it has 4 buttons to push, one that says "I'm a coffee shop offering public access" one that says "I'm a small business with no public access" one that says "I'm a small business with remote workers" and one that says "I'm a network professional take me to the full version"

After clicking the appropriate button they are asked a few minimal questions like "what's the name of your business?" and "what do you want to call your public wifi?" Then a screen should appear that shows them randomly generated passwords and network config information that they can snapshot with their cell phone... And then they never need to look at it again and can put it on a shelf underneath the espresso machine near the bags of coffee beans.

(Not that this is really possible, just that it's what they want)

Consider that these shops often employ people who don't know the difference between an IP address, a domain name, a URL, an ISP, WiFi vs "the internet". It's extremely challenging to help these people in any way other than just setting things up and making opinionated decisions on their behalf. I'm not in any way belittling these people; they're just not knowledgeable, in the same way that I don't know a thing about, say, getting good coloration while tie-dyeing t-shirts or weaving native style reed baskets.

There's a standard for a QR code for phones and tablets to acquire WiFi connection details. If this could be popped up on a screen (phone, PC), with some means to print it, would that help the small business owner?
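For reference, the text inside such a QR code is just a short string. The sketch below builds it using the de facto `WIFI:` format as I understand it (popularised by the ZXing barcode project); the function name and sample values are made up, and turning the string into an actual QR image needs a separate library that isn't shown.

```python
def wifi_qr_payload(ssid: str, password: str, auth: str = "WPA", hidden: bool = False) -> str:
    """Build the text payload phones expect in a WiFi QR code (de facto WIFI: format)."""

    def esc(value: str) -> str:
        # Backslash is first in the list so it is escaped before the others.
        for ch in '\\;,:"':
            value = value.replace(ch, "\\" + ch)
        return value

    fields = [f"T:{auth}", f"S:{esc(ssid)}", f"P:{esc(password)}"]
    if hidden:
        fields.append("H:true")
    return "WIFI:" + ";".join(fields) + ";;"


# Hypothetical coffee shop guest network:
print(wifi_qr_payload("Coffee Shop Guest", "beans;4:all"))
# WIFI:T:WPA;S:Coffee Shop Guest;P:beans\;4\:all;;
```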

I am happy openwrt has support for 240/4 and 0/8 now. I am not sure if the gui lets you use 0/8 at this time, and certainly figuring out the uses for these new ranges is lagging in the ietf.

Also:

A month or two back, I took enormous flak for also helping propose we reduce 127/8 to 127/16, and not enough folk took a look at what I regard as a more valid proposal, which is making the "Zeroth" or "lowest" address generally usable against various CIDR netmasks and finally retiring BSD 4.2 backward compatibility. I think this latter feature is rather desirable, especially for those with a /30, in giving you 3 (rather than 2) usable ipv4 addresses.
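A minimal illustration of the /30 case using Python's standard `ipaddress` module. The `allow_lowest` flag is hypothetical and only models the proposal; it doesn't correspond to any real OS setting. The prefix is a documentation range.

```python
import ipaddress


def usable_hosts(cidr: str, allow_lowest: bool = False) -> list:
    """Usable unicast addresses in a subnet, optionally counting the lowest address."""
    net = ipaddress.ip_network(cidr)
    hosts = list(net.hosts())  # classic behaviour: excludes lowest and broadcast
    if allow_lowest and net.prefixlen < 31:
        hosts.insert(0, net.network_address)  # the "zeroth" address becomes usable
    return hosts


print(len(usable_hosts("192.0.2.0/30")))                     # 2
print(len(usable_hosts("192.0.2.0/30", allow_lowest=True)))  # 3
```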

In none of these cases did we actually propose use cases for them, merely proposing they move from "reserved" to "unicast" status. I would hope the use case for "lowest" would be obvious (failover redundancy, monitoring tools).

Now, donning my fire retardant suit, there's the possibilities of opening up 127 for "other stuff". https://datatracker.ietf.org/doc/draft-schoen-intarea-unicast-127/ is the first ietf draft in this area, and again, all it suggests is we reallocate these for unicast outside of localhost.

To try and forestall the flak somewhat, I had several use cases for using stuff above 127/16 for something... but please read the draft? One was for use by vms and containers on a single machine, extending the notion of "localhost" to mean "stuff on the host". IPv4 is still, in general, cheaper than ipv6, offloads do not help for local services on the router, and the everlasting maze of rfc1918 style ipv4 addresses in kubernetes has to be debugged to be believed. (multiple firewalls, multiple layers of nat)

Longer term I kind of think that the notion of a wider localhost than ::1 for ipv6 makes sense also in this context.

In any case, having a good place to see what breaks if we fiddle with these archaic allocations is something that a cerowrt was good for... and I really do miss the relative simplicity of firewalling that was in cerowall, a lot.

I really don't understand those efforts, 127.0.0.0/8 in particular (the others to lesser degrees). Even if accepted (which I can't imagine), the time it would take to update the critical infrastructure to accept these IP ranges will be measured in decades, especially for 127.0.0.0/8, which pretty much every device in existence has hardcoded. It would indeed be more likely to go IPv6-only within that time (the whole stack, from internet backbone to every CPE, desktop, phone and IoT device, will have to be replaced anyway, so there's no reason not to go IPv6 at the same time) than to risk using 127.123.245.0/24 for anything.

--

Totally ignoring the security aspects, actual usage of these ranges in deployed software, and the expectable misrouted traffic hitting those addresses for even longer.

Yes, reserving 127.0.0.0/8 for loopback purposes is a waste, but that ship had sailed in the early eighties, late eighties at the latest.

Seriously, we really really need a strong economic incentive to push for IPv6 everywhere. For example, an infrastructure bill that required all ISPs to pay back whatever they got from the govt if they don't offer a native /56 to everyone and a /48 to anyone who sends a request, including all business contracts, by Jan 1 2023. And a $200/mo/address tax on the use of globally routable IPv4 addresses by businesses after Jan 1 2023... In the US we have ~ 50% of consumer traffic going ipv6 already, just tip it the rest of the way with carrot and stick.

Seriously, it's easier and cheaper to use ipv6 only networks than it is to keep ipv4 networks going except when there's some ancient piece of software that no one has updated in years but runs your billing department or whatever.

So much laziness in the networking world around not learning anything about ipv6

It would be useful to have an OpenWrt build that has no IPv4 configured on LANs by default, and runs Tayga and DNS64 on the router so people could plug it in and get a testing network without much work.
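For anyone unfamiliar with what DNS64 actually hands to clients, here is a rough sketch of the address synthesis, assuming the well-known NAT64 prefix `64:ff9b::/96` from RFC 6052. A real deployment may use a different prefix, and this is not how Tayga or any particular resolver is configured; the function name is made up.

```python
import ipaddress

# Well-known NAT64 prefix (RFC 6052); a network-specific prefix is also possible.
WELL_KNOWN_PREFIX = ipaddress.ip_network("64:ff9b::/96")


def synthesize_aaaa(ipv4: str, prefix=WELL_KNOWN_PREFIX) -> ipaddress.IPv6Address:
    """Embed an IPv4 address in the low 32 bits of a /96 NAT64 prefix."""
    if prefix.prefixlen != 96:
        raise ValueError("this sketch only handles /96 prefixes")
    v4 = ipaddress.IPv4Address(ipv4)
    return ipaddress.IPv6Address(int(prefix.network_address) | int(v4))


print(synthesize_aaaa("192.0.2.33"))  # 64:ff9b::c000:221
```

An IPv6-only client connects to the synthesized address, and the NAT64 translator on the router maps it back to the embedded IPv4 destination.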

Yeah, people should pick their battles. Even the massive 240/4 (aka "class E") would probably be easier to implement, and would only have bought 18 months of growth back when it was first proposed. And as it turns out, delay benefits no one: it just makes it easier for people to believe they have that much longer not to do anything.

(Aside: in my role as lead SW developer for a Fortune 100 tech giant I will leave nameless, I fought this battle many times: the insistence of QC and dev analysts to put in a requirement that the application would not permit the use of "reserved" IP addresses. I spent entire meetings convincing them that it is not our job to police network allocations and that it was the customer's job to know their IP addresses, not our job to tell them. I generally won these arguments but it was ridiculously hard work, wasted lots of time and made it very clear to me why thousands of other companies did the wrong thing.)

The comparatively trivial case of the DoD IP ranges can serve as an example: a totally valid IP range, still blocked and abused by large and small operators for invalid purposes (e.g. transit networks for big ISPs who should know better) all around the world. That's not going to change anytime soon either, which leaves those IP ranges burnt to quite some degree as well, even though, unlike the ranges mentioned here, there wouldn't be any reason for that.

Making 0/8 "just work" involved removing the check for it. It saved a few nanoseconds, and has been in linux and openwrt for several years now.

240/4 has basically worked for over a decade. The only thing missing from openwrt and linux was correct support for ifconfig which went in a few years ago.

In both cases, most of the world of iot already works with both these ipv4 address ranges, (because they never checked for it in the first place, only checking for multicast), while most of that same world still lacks ipv6 support.
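To illustrate the difference between the two styles of check, a hypothetical sketch follows. Neither function is taken from any real stack; they just contrast "only reject multicast" with "reject everything marked reserved".

```python
import ipaddress

MULTICAST = ipaddress.ip_network("224.0.0.0/4")
ZERO_NET = ipaddress.ip_network("0.0.0.0/8")
CLASS_E = ipaddress.ip_network("240.0.0.0/4")


def lenient_unicast_check(addr: str) -> bool:
    """Style attributed to many IoT stacks above: only multicast is rejected.
    (A real stack would also special-case 255.255.255.255; omitted here.)"""
    return ipaddress.ip_address(addr) not in MULTICAST


def strict_unicast_check(addr: str) -> bool:
    """Style that also polices the formerly reserved 0/8 and 240/4 ranges."""
    ip = ipaddress.ip_address(addr)
    return not any(ip in net for net in (MULTICAST, ZERO_NET, CLASS_E))


for addr in ("0.1.2.3", "240.0.0.1", "224.0.0.1", "192.0.2.1"):
    print(addr, lenient_unicast_check(addr), strict_unicast_check(addr))
```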

When last I looked, the growth pattern for ipv6 had it finally winning by 2135 or so. Also, the most eloquent argument made for why a new ipv4 address space would last for longer than 18 months is that there is a functioning market for existing ipv4 address spaces now. Secondly, universal connectivity for a newer address space is not needed at the outset, and I enjoy the thought of 240/4 being immune to all the Windows-borne worms out there (because that's the only OS that 240 doesn't work on).

Note: I have worked very hard on ipv6, but I think that ipv4 will co-exist with it indefinitely.

I would like very much for someone to attempt a "6wrt" ripping out all ipv4 functionality by default and see how far you get. I'd also rather like to see ipv6 evolve towards better meeting the needs of small business and the home - that especially includes things like "pcp", quality (m)dns, source specific routing for RA, and a productive dialog with end users to make ipv6 an ever more compelling argument over ipv4. But ipv4 + nat allows for permissionless innovation, where being slaves to your ISP's ipv6 address distribution policy is an ongoing headache, still.

@slh I would rather like to see the DoD ranges put up for sale also, given current market prices we are talking billions of dollars, and the funds used by the governments to enable the shift to ipv4+nat and/or ipv6.

in the 6wrt case there are also some very old protocols (like tftp) used in early boot that need to be upgraded to ipv6 also.

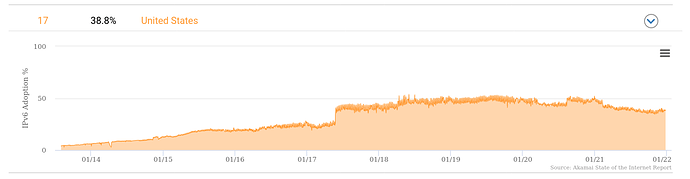

ipv6 goes in fits and starts. ISPs finally "throw the switch" and the "instantaneous growth rate" is extremely high. That step function in 2017 in the Akamai graph was, I think, Comcast or Spectrum turning on IPv6.

If we charge end users a $20/mo tax for ipv4 on their accounts, or let them use NAT64/DNS64 without the tax, consumers will bail on ipv4, and when consumers bail on it, software providers will get their act together to support ipv6 (game mfg in particular would be an important category).