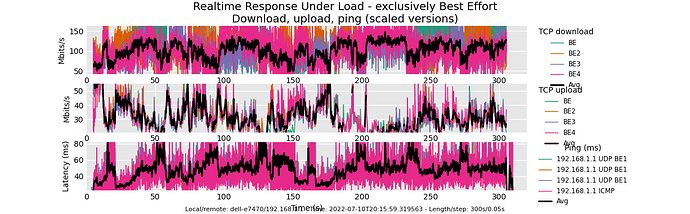

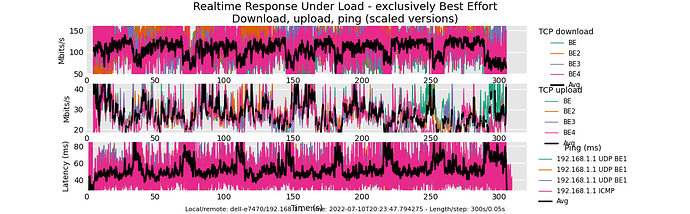

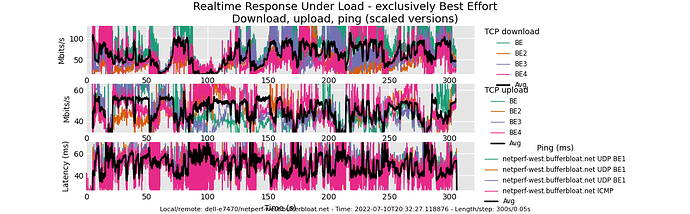

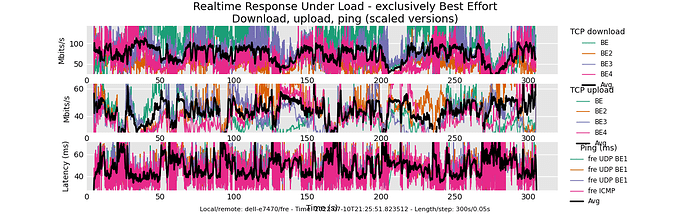

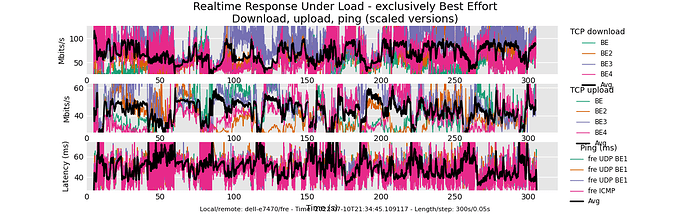

I ran the RRUL BE tests against three servers, running the test twice for each server. The R7800 router runs a 22.03.0 RC5 image with NSS acceleration and without SQM enabled. The ISP speed is roughly 650 Mbps down / 220 Mbps up. The router and client are about 50 ft apart, with three drywall partitions in between.

- Running locally on the R7800 (performance governor, NSS accelerated, no SQM)

- netperf-west.bufferbloat.net

- flent-fremont.bufferbloat.net
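For anyone wanting to repeat a run like this, here is a minimal sketch that builds the flent command lines for the three servers, two runs each. The router address `192.168.1.1`, the 60-second test length, and the title format are my assumptions for illustration and are not taken from the runs above; the script only prints the commands rather than executing them.

```python
import shlex

# Assumed values: the router address and the 60-second length are
# placeholders, not the settings used for the plots in this post.
SERVERS = [
    "192.168.1.1",  # hypothetical LAN address of the R7800 running netserver
    "netperf-west.bufferbloat.net",
    "flent-fremont.bufferbloat.net",
]
RUNS_PER_SERVER = 2
TEST_LENGTH_S = 60


def build_commands(servers=SERVERS, runs=RUNS_PER_SERVER, length=TEST_LENGTH_S):
    """Return one flent argv list per (server, run) pair."""
    commands = []
    for host in servers:
        for run in range(1, runs + 1):
            # -t adds a title suffix so the two runs per server can be told apart.
            title = f"rrul_be-{host}-run{run}"
            commands.append(
                ["flent", "rrul_be", "-H", host, "-l", str(length), "-t", title]
            )
    return commands


if __name__ == "__main__":
    for argv in build_commands():
        # Print only; hand argv to subprocess.run() to actually start a test.
        print(shlex.join(argv))
```

Each printed line can be pasted into a shell on the client, provided flent and netperf are installed there and netserver is reachable on the target host.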

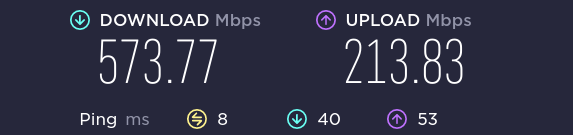

SPEEDTEST (same client location as when running flent)

SERVER: locally running on the router (R7800, performance governor)

SERVER: netperf-west.bufferbloat.net

SERVER: flent-fremont.bufferbloat.net