Well, it's quite challenging to port U-Boot, as there aren't really any existing drivers that can be reused.

I pushed the driver changes to parse the label property and use it as the interface name only half an hour ago, and I have not set any labels in the DTS so far.

Ah so the changes in d43ed79

No, there are no changes.

About an hour ago I pushed support for NSS DP to read the interface name from the label property, if it is set in the DTS:

From 358b93e40d0c6b6d381fe0e9d2a63c45a10321b3 Mon Sep 17 00:00:00 2001
From: Robert Marko <robimarko@gmail.com>
Date: Sun, 4 Dec 2022 18:41:36 +0100
Subject: [PATCH] nss-dp: allow setting netdev name from DTS

Allow reading the desired netdev name from DTS like DSA allows and then
set it as the netdev name during registration.

If label is not defined, simply fallback to kernel ethN enumeration.

Signed-off-by: Robert Marko <robimarko@gmail.com>
---
 nss_dp_main.c | 17 ++++++++++++++---
 1 file changed, 14 insertions(+), 3 deletions(-)

diff --git a/nss_dp_main.c b/nss_dp_main.c
index 18e1088..19e14fb 100644
--- a/nss_dp_main.c
+++ b/nss_dp_main.c
@@ -685,18 +685,29 @@ static int32_t nss_dp_probe(struct platform_device *pdev)

But I have not changed it on any boards at all.

Cypher1

January 7, 2023, 10:08pm

482

Can't seem to get past 2.3 Gbit/s on the latest build, with 100% usage on a single core.

iperf3 -c 192.168.1.1
Connecting to host 192.168.1.1, port 5201
[  4] local 192.168.1.201 port 9752 connected to 192.168.1.1 port 5201
[ ID] Interval           Transfer     Bandwidth
[  4]   0.00-1.00   sec   263 MBytes  2.21 Gbits/sec
[  4]   1.00-2.00   sec   274 MBytes  2.29 Gbits/sec
[  4]   2.00-3.00   sec   273 MBytes  2.29 Gbits/sec
[  4]   3.00-4.00   sec   276 MBytes  2.32 Gbits/sec
[  4]   4.00-5.00   sec   275 MBytes  2.31 Gbits/sec
[  4]   5.00-6.00   sec   274 MBytes  2.30 Gbits/sec
[  4]   6.00-7.00   sec   275 MBytes  2.31 Gbits/sec
[  4]   7.00-8.00   sec   272 MBytes  2.29 Gbits/sec
[  4]   8.00-9.00   sec   273 MBytes  2.29 Gbits/sec
[  4]   9.00-10.00  sec   275 MBytes  2.31 Gbits/sec
- - - - - - - - - - - - - - - - - - - - - - - - -
[ ID] Interval           Transfer     Bandwidth
[  4]   0.00-10.00  sec  2.67 GBytes  2.29 Gbits/sec  sender
[  4]   0.00-10.00  sec  2.67 GBytes  2.29 Gbits/sec  receiver

sqrwv

January 9, 2023, 12:01am

483

The iperf3 instance that you run on the router is single-threaded and cannot spread across the other cores; that will always be a limitation of the test you are doing.

Enable SW flow offloading and Packet Steering, then reboot the router for Packet Steering to take effect.

Also try iperf3 -c 192.168.1.1 -R in your test.
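For reference, a minimal sketch of those two settings from the command line instead of LuCI. The option names are an assumption based on recent OpenWrt releases (software flow offloading in the firewall defaults section, packet steering in the network globals section) and may differ on other builds, and the function name is just made up for this example:

```shell
# Sketch only: option names assume a recent OpenWrt release.
# Check `uci show firewall` and `uci show network` on your build first.
enable_sw_offload_and_steering() {
    uci set 'firewall.@defaults[0].flow_offloading=1'   # software flow offloading
    uci set 'network.globals.packet_steering=1'         # packet steering
    uci commit firewall
    uci commit network
}

# On the router: call enable_sw_offload_and_steering, then reboot.
# On the client, test the reverse direction (router sends, client receives):
#   iperf3 -c 192.168.1.1 -R
```

The function only writes and commits the config; the reboot is left as a manual step so nothing restarts by surprise.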

PS: I don't have this router, so I don't know what speed to expect.

Cypher1

January 10, 2023, 6:48am

484

Were you able to solve this issue? I tested 8000 Mbit/s with stock firmware, and 2000 Mbit/s direct from the router. Plugged into the 10G port to an Asus 10G NIC and cannot get full speed. SW offloading and packet steering are both on.

Considering there is no offloading, you are not going to get close to stock FW.

Can you share the output of ethtool 10g-2 (I presume this is the port that is bridged to br-lan and that you used to connect to your Asus 10G NIC)?

Second point: I can see the load is mostly on cpu0. Try irqbalance and/or move some of the interrupts to the other CPUs.
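As a rough sketch of moving an interrupt by hand: /proc/irq/<N>/smp_affinity takes a hexadecimal CPU bitmask, so CPU n corresponds to 1 << n. The helper name cpu_mask and the IRQ number 45 below are placeholders for illustration only; the real IRQ numbers have to be looked up in /proc/interrupts, and not every interrupt can be moved.

```shell
# Hypothetical helper: print the hex smp_affinity mask for a single CPU index.
cpu_mask() {
    printf '%x\n' $((1 << $1))
}

# Example usage on the router (IRQ 45 is a placeholder, not a real value):
#   cat /proc/interrupts                               # find the busy IRQs first
#   echo "$(cpu_mask 2)" > /proc/irq/45/smp_affinity   # pin IRQ 45 to cpu2
```

Writes to smp_affinity do not survive a reboot, so this is only for experimenting until a good distribution is found.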

On two 1G ports (I am using mwan3) I get about 1.2 Gbit/s, so I am surprised that you are not getting more, even without HW offloading:

Ping: 17 ms.

Jitter: 8 ms.

Determine line type (2) ........................

Fiber / Lan line type detected: profile selected fiber

Testing download speed (32) ................................................................................................................................................................................................................................................................................................................................

Download: 1211.70 Mbit/s

Testing upload speed (12) ....................................................................................

Upload: 54.50 Mbit/s

Alternatively, you could try adding qca-nss-drv to your own build, which brings some optimisations without HW offloading (NSS-ECM). My personal tests with NSS-ECM haven't shown noticeable improvements, but that is most likely down to my own use case.

Cypher1

January 11, 2023, 2:54am

488

root@OpenWrt:~# ethtool 10g-2
Settings for 10g-2:
    Supported ports: [ ]
    Supported link modes:   100baseT/Half 100baseT/Full
                            1000baseT/Full
                            10000baseT/Full
                            1000baseKX/Full
                            10000baseKX4/Full
                            10000baseKR/Full
                            2500baseT/Full
                            5000baseT/Full
    Supported pause frame use: Symmetric Receive-only
    Supports auto-negotiation: Yes
    Supported FEC modes: Not reported
    Advertised link modes:  100baseT/Half 100baseT/Full
                            1000baseT/Full
                            10000baseT/Full
                            1000baseKX/Full
                            10000baseKX4/Full
                            10000baseKR/Full
    Advertised pause frame use: Symmetric Receive-only
    Advertised auto-negotiation: Yes
    Advertised FEC modes: Not reported
    Link partner advertised link modes:  100baseT/Full
                                         1000baseT/Full
                                         10000baseT/Full
                                         2500baseT/Full
                                         5000baseT/Full
    Link partner advertised pause frame use: No
    Link partner advertised auto-negotiation: Yes
    Link partner advertised FEC modes: Not reported
    Speed: 10000Mb/s
    Duplex: Full
    Auto-negotiation: on
    Port: Twisted Pair
    PHYAD: 0
    Transceiver: external
    MDI-X: Unknown
    Link detected: yes

irqbalance enabled and not working. I'll look into NSS-ECM.

root@OpenWrt:~# speedtest-netperf.sh -H netperf-west.bufferbloat.net
2023-01-11 02:41:56 Starting speedtest for 60 seconds per transfer session.
Measure speed to netperf-west.bufferbloat.net (IPv4) while pinging gstatic.com.
Download and upload sessions are sequential, each with 5 simultaneous streams.
............................................................
 Download: 2100.57 Mbps
  Latency: [in msec, 0 pings, 0.00% packet loss]
 CPU Load: [in % busy (avg +/- std dev) @ avg frequency, 57 samples]
     cpu0: 97.4 +/- 0.0 @ 2203 MHz
     cpu1: 19.0 +/- 1.7 @ 2198 MHz
     cpu2: 20.1 +/- 2.2 @ 2203 MHz
     cpu3: 18.3 +/- 2.4 @ 2198 MHz
 Overhead: [in % used of total CPU available]
  netperf: 13.9
............................................................
   Upload: 1371.50 Mbps
  Latency: [in msec, 0 pings, 0.00% packet loss]
 CPU Load: [in % busy (avg +/- std dev) @ avg frequency, 57 samples]
     cpu0: 36.2 +/- 4.1 @ 1361 MHz
     cpu1: 31.3 +/- 5.4 @ 1428 MHz
     cpu2: 16.9 +/- 3.8 @ 1437 MHz
     cpu3: 14.5 +/- 3.5 @ 1526 MHz
 Overhead: [in % used of total CPU available]
  netperf: 8.7

No, I am still stuck at <400 Mbit/s to WAN, even though my local link rates are ~2 Gbit/s. Unsure what it is with this device.

That is crazy low; even with PPPoE you should be getting 800+ with NAT.

Running a WAN speed test on the QNAP itself yields pretty close to the expected speeds, but any devices connected to it are capped. Not a super crazy config; mostly just straight NAT.

It seems to derive from advertising 2.5G on the 10G WAN port with

ethtool -s 10g-2 advertise 18000000E102C
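For anyone wondering where that value comes from: the advertise argument is a hexadecimal bitmask of link modes. A small sketch, assuming the usual Linux ethtool link-mode bit positions (worth double-checking against man ethtool; the function name advertise_mask is made up here), shows that ORing the nine modes listed as supported in the ethtool 10g-2 output earlier in the thread gives exactly this mask:

```shell
# Build an ethtool "advertise" mask from link-mode bit positions.
# Assumed bit positions (verify against `man ethtool`):
#    2 = 100baseT/Half     3 = 100baseT/Full      5 = 1000baseT/Full
#   12 = 10000baseT/Full  17 = 1000baseKX/Full   18 = 10000baseKX4/Full
#   19 = 10000baseKR/Full 47 = 2500baseT/Full    48 = 5000baseT/Full
advertise_mask() {
    mask=0
    for bit in "$@"; do
        mask=$(( mask | (1 << bit) ))   # set the bit for this link mode
    done
    printf '%X\n' "$mask"
}

advertise_mask 2 3 5 12 17 18 19 47 48   # prints 18000000E102C
```

Leaving out bits 47 and 48 gives E102C, which corresponds to the advertised list in that earlier output (no 2500baseT/Full or 5000baseT/Full).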

Hm, that is really, really weird.

cat /sys/kernel/debug/clk/clk_summary

Cypher1

January 14, 2023, 6:55pm

495

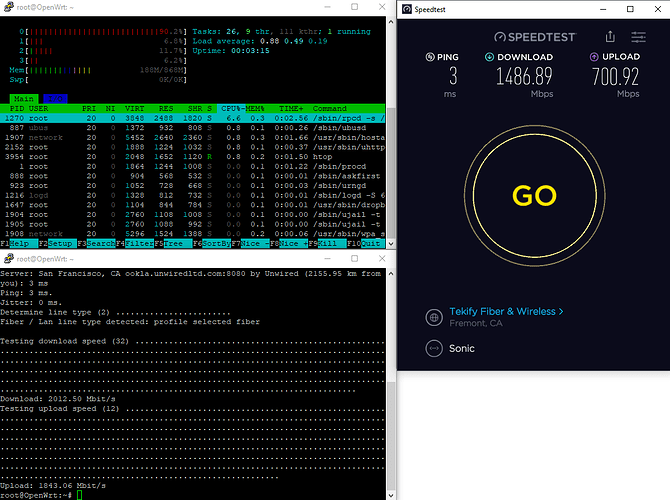

Not sure why my post above was flagged, but I have all my speed problems fixed with a build from some Chinese developers. Looks like they have the flow offloading working. Full 8000 Mbit/s when testing from a computer.

Advertising random Chinese builds as the solution isn't really well-liked, as that is not OpenWrt.

Can you please post more information on the other build? Is it on GitHub?

Also, looking at the screenshots and doing some googling, it looks like we just need qca-nss-ecm added to the firmware, which appears to be on GitHub and in some other OpenWrt builds already. I could be wrong, but it looks promising.

"Just" is a bit of an understatement

I assume that it isn't as easy as adding software to the firmware under the Software tab?